BIG-IP DNS Resource Record Types: Architecture, Design and Configuration

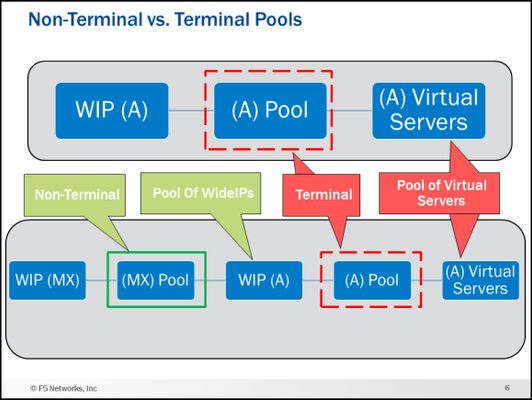

Welcome to my first article on DevCentral! This article starts a series about BIG-IP DNS (the artist formerly known as GTM). This article and the accompanying videos look at the support for Domain Name System (DNS) Resource Record (RR) types introduced in BIG-IP version 12.0. This enhancement represented a major step forward in the capabilities available on the BIG-IP.

I created these videos during the initial introduction of the feature in the BIG-IP 12.0 release. Based on the timestamp in the BIG-IP GUI, that would be October of 2015. The information presented here is still relevant for later versions of BIG-IP and will benefit anyone trying to understand the different DNS resource record types available on the BIG-IP.

The videos assume you have used BIG-IP DNS before and that you understand the basics of DNS. If you need a refresher on DNS resource records, I present an overview of the new and existing resource records supported by BIG-IP DNS. I then go into the feature itself and the architecture and implementation details for each of the records on BIG-IP DNS. In the last video, I do a configuration walk-through of a NAPTR and SRV wideIP along with the NAPTR and SRV pools.

Be sure to see the attachment at the end of the article. It is a zip file that contains a PDF of many of the slides I use in the videos.

Executive Summary (9 Minutes)

First up is the Executive Summary. This video introduces, at a high level, everything that will be covered in the later videos. It also talks about some things that are not explicitly called out in any of the other content.

Technology Overview (8 Minutes)

Next is a DNS technology overview for the different resource record types supported by BIG-IP DNS. If you are already familiar with the different DNS RR types (A, AAAA, CNAME, NAPTR, SRV, MX), you can skip this part. If you need a quick refresher on what each record type is and the fields used in them, this content is for you!
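As a small refresher on the fields, here is a minimal sketch of the SRV record data in the purely DNS sense (priority, weight, port, target, as defined in RFC 2782). It says nothing about how BIG-IP DNS stores or serves these records. The `SrvRecord` type, the helper names and the `sip*.example.com.` hostnames are made up for illustration.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class SrvRecord:
    """The four RDATA fields of an SRV record (RFC 2782)."""
    priority: int  # lower value is tried first
    weight: int    # relative share among records of equal priority
    port: int      # port on which the service listens
    target: str    # hostname of the server providing the service


def parse_srv_rdata(rdata: str) -> SrvRecord:
    """Parse presentation-format SRV RDATA: 'priority weight port target'."""
    priority, weight, port, target = rdata.split()
    return SrvRecord(int(priority), int(weight), int(port), target)


def lowest_priority_group(records: List[SrvRecord]) -> List[SrvRecord]:
    """Return the records a client should try first (lowest priority value)."""
    if not records:
        return []
    best = min(r.priority for r in records)
    return [r for r in records if r.priority == best]


# Hypothetical records for illustration only.
records = [
    parse_srv_rdata("10 60 5060 sip1.example.com."),
    parse_srv_rdata("10 40 5060 sip2.example.com."),
    parse_srv_rdata("20 0 5060 backup.example.com."),
]
first_choice = lowest_priority_group(records)
```

A client would pick among `first_choice` in proportion to `weight` and only fall back to the priority 20 record when the priority 10 targets are unreachable; the weighted random choice is left out here to keep the sketch deterministic.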
NOTE: This is not a discussion of how BIG-IP DNS implements these resource records but rather a discussion of the record types themselves in a purely DNS sense.

Feature/Architecture Overview

If you do not watch any of the other videos in this series, watch these!! I go over the concepts and architecture behind how the different resource records are implemented and configured on BIG-IP DNS, with lots of diagrams. The configurations of the different resource record types and all the associated pools can get confusing. These videos will give you a solid foundation to follow the configuration walk-through. I am going to be bold here and say that I guarantee you will learn something you did not know about BIG-IP DNS if you watch the next five videos. As a polling mechanism, hit the “Like” button on the article if I was correct in my prediction.

General Information and A/AAAA Records (11:30 Minutes)

This video covers general information about the DNS RR implementation along with the A and AAAA record types. For example, the video talks about a new concept in BIG-IP DNS called Non-Terminal and Terminal Pools and what they mean in wideIP configurations.

CNAMEs (9 Minutes)

This is a long discussion about CNAMEs. Who knew they were so interesting? Well, they are, and it is worth listening to how they are implemented on BIG-IP DNS.

NAPTR, SRV and MX Records (5:30 Minutes)

NAPTR, SRV and MX records are next. The configuration walk-through later in this article will implement NAPTR and SRV wideIPs.

Health Checks (7:30 Minutes)

Let’s talk about one of the reasons you have a BIG-IP DNS…health checks! Now that wideIPs can be pool members, the game has changed.

Persistence (8 Minutes)

Persistence also has some new wrinkles. If you use persistence, you want to watch this.

Configuration Walk-through (11:30 Minutes)

Finally, we have the configuration walk-through. This is where things get real. In this video, I do a configuration of NAPTR and SRV wideIPs along with the NAPTR and SRV pools. You can see the configuration objects I will create and the order in the diagram below.

Conclusion

That is all I have for this article. I hope you learned something and, most importantly, have a better understanding of the different DNS resource record types available on BIG-IP DNS. I have more articles and videos to come, so stay tuned. Be sure to grab the attachment if you want a copy of some of the diagrams used in the videos.

Trivia: iQuery uses port 4353 for its communication. Do you know the significance/meaning of that particular port number? Drop a comment at the bottom if you know the answer.

Using BIG-IP GTM to Integrate with Amazon Web Services

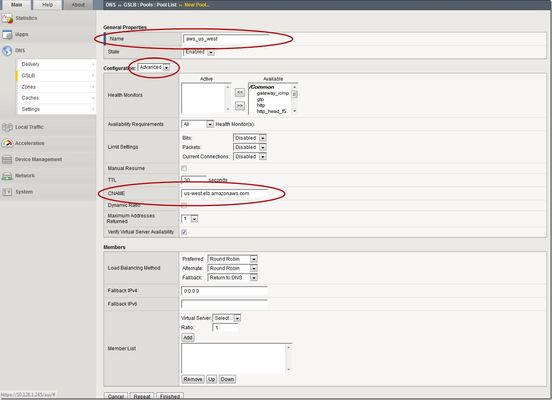

This is the latest in a series of DNS articles that I've been writing over the past couple of months. This article is taken from a fantastic solution that Joe Cassidy developed. So, thanks to Joe for developing this solution, and thanks for the opportunity to write about it here on DevCentral. As a quick reminder, my first six articles are:

- Let's Talk DNS on DevCentral
- DNS The F5 Way: A Paradigm Shift
- DNS Express and Zone Transfers
- The BIG-IP GTM: Configuring DNSSEC
- DNS on the BIG-IP: IPv6 to IPv4 Translation
- DNS Caching

The Scenario

Let's say you are an F5 customer who has external GTMs and LTMs in your environment, but you are not leveraging them for your main website (example.com). Your website is a zone sitting on your Windows DNS servers in your DMZ that round-robin load balance to some backend webservers.

You've heard all about the benefits of the cloud (and rightfully so), and you want to move your web content to the Amazon Cloud. Nice choice! As you were making the move to Amazon, you were given instructions by Amazon to just CNAME your domain to two unique Amazon Elastic Load Balanced (ELB) domains. Amazon’s requests were not feasible for a few reasons...one of which is that it breaks the RFC (a CNAME cannot coexist with the other records that must exist at the zone apex, example.com). So, you engage in a series of architecture meetings to figure all this stuff out.

Amazon told your Active Directory/DNS team to CNAME www.example.com and example.com to two AWS clusters: us-east.elb.amazonaws.com and us-west.elb.amazonaws.com. You couldn't use Microsoft DNS to perform a basic CNAME of these records because, as with BIND, a single name cannot be CNAME'd to multiple aliases. Additionally, you couldn't point to IPs because Amazon said they will be using dynamic IPs for your platform. So, what to do, right?

The Solution

The good news is that you can use the functionality and flexibility of your F5 technology to easily solve this problem. Here are a few steps that will guide you through this specific scenario:

1. Redirect requests for http://example.com to http://www.example.com and apply the redirect to your Virtual Server (1.2.3.4:80). You can redirect using HTTP Class profiles (v11.3 and prior) or using a policy with Centralized Policy Matching (v11.4 and newer), or you can always write an iRule to redirect!
2. Make www.example.com a CNAME record to example.lb.example.com, where *.lb.example.com is a sub-delegated zone of example.com that resides on your BIG-IP GTM.
3. Create a global traffic pool “aws_us_east” that contains no members but rather a CNAME to us-east.elb.amazonaws.com.
4. Create another global traffic pool “aws_us_west” that contains no members but rather a CNAME to us-west.elb.amazonaws.com. The following screenshot shows the details of creating the global traffic pools (using v11.5). Notice you have to select the "Advanced" configuration to add the CNAME.
5. Create a global traffic Wide IP example.lb.example.com with two pools, “aws_us_east” and “aws_us_west”. The following screenshot shows the details.
6. Create two global traffic regions: “eastern” and “western”. The screenshot below shows the details of creating the traffic regions.
7. Create global traffic topology records using "Request Source: Region is eastern" and "Destination Pool is aws_us_east". Repeat this for the western region using the aws_us_west pool. The screenshot below shows the details of creating these records.
8. Modify the Pool settings under Wide IP example.lb.example.com to use "Topology" as the load balancing method. See the screenshot below for details.

How it all works...

Here's the flow of events that takes place as a user types in the web address and ultimately receives the correct IP address.

1. External client types http://example.com into their web browser.
2. Internet DNS resolution takes place and maps example.com to your Virtual Server address: IN A 1.2.3.4
3. An HTTP request is directed to 1.2.3.4:80.
4. Your LTM checks for a profile, the HTTP profile is enabled, the redirect is applied, and the user request is redirected with a 301 response code.
5. External client receives the 301 response code and their browser makes a new request to http://www.example.com.
6. Internet DNS resolution takes place and maps www.example.com to IN CNAME example.lb.example.com.
7. Internet DNS resolution continues, mapping example.lb.example.com to your GTM-configured Wide IP.
8. The Wide IP load balances the request to one of the pools based on the configured logic: Round Robin, Global Availability, Topology or Ratio (we chose "Topology" for our solution).
9. The GTM-configured pool contains a CNAME to either the us-east or us-west AWS data center.
10. Internet DNS resolution takes place, mapping the request to the ELB hostname (e.g., us-west.elb.amazonaws.com), and gives two A records.
11. The external client's HTTP request is mapped to one of the returned IP addresses.

And, there you have it. With this solution, you can integrate AWS using your existing LTM and GTM technology! I hope this helps, and I hope you can implement this and other solutions using all the flexibility and power of your F5 technology.

Edge to Pulse: IP Geolocation Database Migration

UPDATE (August 2023): Previously, we announced the end of support of the Edge legacy database. However, support for the Edge database has been extended indefinitely. All supported versions of F5 BIG-IP can now use both types of IP geolocation databases: Edge and Pulse, provided by Digital Envoy. The Edge database is based on partner-supplied data gathered from IP traffic. The Pulse database relies on information derived from mobile devices and Wi-Fi connection points, increasing the accuracy for certain aspects, but also significantly increasing file size. Because of this, F5 does not support City level for the Pulse database.

Last month, F5’s BIG-IP DNS team released the new Pulse database from our third-party vendor, Digital Envoy. F5 migrated its IP geolocation database from the Edge database to the Pulse database because Pulse provides a higher number of available subnets. This allows for improved geolocation accuracy when querying IP addresses. Because Pulse captures more granular location data than Edge, it is larger than the Edge database. Because of the size of the database, the installation update may take longer than expected after the migration.

As with any infrastructure shift, there are a few important things to know:

- This migration applies to all supported BIG-IP versions and is transparent to users
- If using City Database from Digital Envoy, then please follow the KB article before installing the Pulse City RPM (K78974041 - link below)
- There is no change of database format, downloading, or installation procedures
- Edge and Pulse databases are available for an additional three months from release date to ease the migration process
- Both databases are seamlessly available for customer download until the end of 2022
- Beginning in January 2023, only the Pulse database will be accessible
- Edge database will reach End of Life by the end of December 2022

Using Client Subnet in DNS Requests

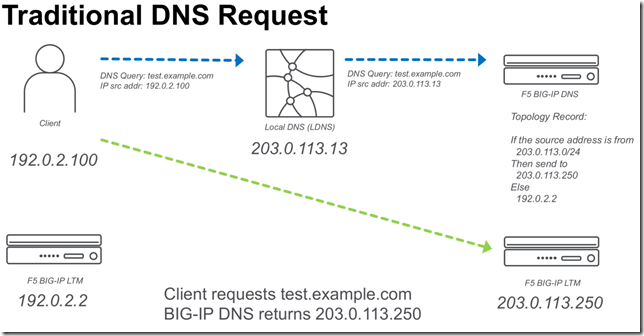

BIG-IP DNS 14.0 now supports edns-client-subnet (ECS) both for responding to client requests (GSLB) and for forwarding client requests (screening). The following is a quick start on using this feature.

What is EDNS-Client-Subnet (ECS)

If you are familiar with X-Forwarded-For headers in HTTP requests, ECS solves a similar problem. The problem is how to forward a DNS request through a proxy and preserve information about the original request (IP address). I also cover some of this discussion in a previous article, Implementing Client Subnet in DNS Requests.

Traditional DNS Requests

When a traditional DNS request is made, a client makes a request to a “local” DNS server (LDNS), and that request is forwarded to the authoritative DNS server for that domain. When a topology record (one that sends different responses based on the source address) is evaluated, it will use the source IP of the LDNS server. This is OK for most applications, but it would be ideal to be able to forward more precise information from the LDNS server.

ECS DNS Requests

Using ECS, an LDNS server can inject additional metadata about the request that includes information about the source IP address of the client. In the following example, a “Client Subnet” of 192.0.2.0/24 is forwarded to the DNS server.

ECS on BIG-IP DNS

F5 BIG-IP DNS can use ECS in two ways:

- Use ECS when handling topology requests
- Inject ECS when “screening” a DNS server

Using ECS with BIG-IP DNS Topology

There are two methods of configuring BIG-IP DNS to use ECS: either at the wide-ip or globally.

To configure ECS on a wide-ip:

To configure ECS globally, look under DNS Settings.

Injecting ECS records

BIG-IP DNS can also proxy requests to other DNS servers (BIG-IP DNS or other vendors). When you modify the DNS profile to insert an ECS record, you will observe that the original /32 address is forwarded to any DNS servers that are in the pool for that particular Virtual Server.
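To make the "Client Subnet" metadata more concrete, here is a minimal sketch of how the ECS option is laid out on the wire according to RFC 7871 (option code 8, then family, source prefix length, scope prefix length and the truncated address). This illustrates the protocol field layout only; it is not BIG-IP code, and the function name `build_ecs_option` is made up for this example.

```python
import ipaddress
import struct

ECS_OPTION_CODE = 8  # EDNS0 option code assigned to Client Subnet (RFC 7871)


def build_ecs_option(subnet: str, scope_prefix_len: int = 0) -> bytes:
    """Encode an EDNS Client Subnet option for the given subnet.

    In a query the scope prefix length must be 0; the responding server
    fills it in to say how specific its answer is.
    """
    # strict=False masks off any host bits, as the RFC requires.
    net = ipaddress.ip_network(subnet, strict=False)
    family = 1 if net.version == 4 else 2  # 1 = IPv4, 2 = IPv6
    source_prefix_len = net.prefixlen
    # Only as many address bytes as are needed to cover the prefix are sent.
    addr_len = (source_prefix_len + 7) // 8
    address = net.network_address.packed[:addr_len]
    payload = struct.pack("!HBB", family, source_prefix_len, scope_prefix_len) + address
    # Option header: 16-bit option code, 16-bit option length.
    return struct.pack("!HH", ECS_OPTION_CODE, len(payload)) + payload


# The /24 from the example above, and a full /32 as forwarded when screening.
subnet_option = build_ecs_option("192.0.2.0/24")
host_option = build_ecs_option("192.0.2.55/32")
```

For the /24, only the first three address bytes (192, 0, 2) are carried, which is how an LDNS can share the client's network without revealing the full address. The 192.0.2.55 host address is an arbitrary documentation-range value chosen for the example.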
The following is a diagram of the above.

F5 DNS Enhancements for DSC in BIG-IP v13

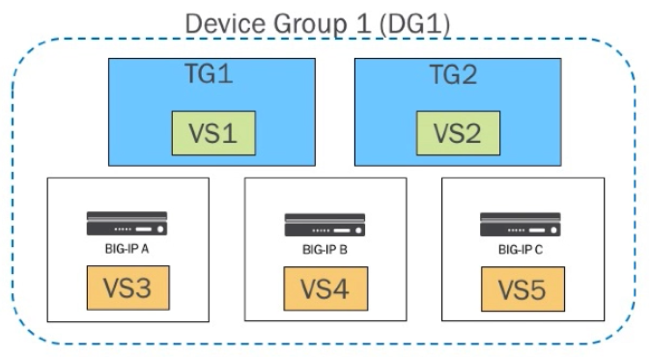

Prior to v13, F5 DNS assumed that all devices in a cluster had knowledge of all virtual servers, which kept virtual server auto-discovery from functioning properly. In this article, we’ll cover the changes to the F5 DNS server object introduced in v13 to solve this problem.

In the scenario below, we have 3 BIG-IPs in a device group. In that device group we have two traffic groups, each serving a single floating virtual server, and then each BIG-IP has a non-floating virtual server.

Let’s look at the behavior prior to v13. When F5 DNS receives a get config message from BIG-IP A, it discovers the virtual servers that BIG-IP A knows about: the two failover objects (vs1 and vs2) and the non-floating object (vs3). All is well at this point, but the problem becomes obvious when we look at the status after F5 DNS receives a get config message from BIG-IP B.

Now that F5 DNS has received an update from BIG-IP B, it discovers vs1, vs2, and vs4, but doesn’t know about vs3, and thus removes it. This leads to flapping of these non-floating objects as more get config messages from the various BIG-IPs in the device group are received. There are a couple of workarounds:

- Disable auto-discovery
- Configure the BIG-IPs as standalone server objects - this will result in all three BIG-IPs (A, B, and C) having vs1 and vs2, but they can be used as you normally would without concern

v13 Changes

The surface changes all center on the server object. Previously, you would add a BIG-IP System (Single) or (Redundant), but those types are merged in v13 into just a BIG-IP System.

Note that when you add the “device” to the server object, you are adding the appropriate self-IP from each BIG-IP device in the cluster, so the device is really a cluster of devices.
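The flapping is easy to see in a toy model. The sketch below contrasts the pre-v13 behavior described above (each get config message replaces the discovered set) with tracking virtual servers per device, which is the idea behind the v13 change. It is only an illustration under simplifying assumptions, not F5's implementation; the class and function names, and vs5 on BIG-IP C, are made up.

```python
from typing import Dict, Iterable, List, Set, Tuple


def discover_pre_v13(messages: Iterable[Tuple[str, List[str]]]) -> List[Set[str]]:
    """Pre-v13 model: every get config message replaces the whole discovered set."""
    history = []
    for _device, virtual_servers in messages:
        history.append(set(virtual_servers))
    return history


class PerDeviceTracker:
    """Per-device model: remember what each device reported separately."""

    def __init__(self) -> None:
        self._by_device: Dict[str, Set[str]] = {}

    def update(self, device: str, virtual_servers: Iterable[str]) -> None:
        self._by_device[device] = set(virtual_servers)

    def known(self) -> Set[str]:
        # A virtual server stays known while at least one device reports it.
        return set().union(*self._by_device.values())


# vs1/vs2 float; vs3, vs4 and the hypothetical vs5 are non-floating.
messages = [
    ("A", ["vs1", "vs2", "vs3"]),
    ("B", ["vs1", "vs2", "vs4"]),
    ("C", ["vs1", "vs2", "vs5"]),
]

old_view = discover_pre_v13(messages)   # vs3 disappears after B's message

tracker = PerDeviceTracker()
for device, vs_list in messages:
    tracker.update(device, vs_list)
new_view = tracker.known()              # vs3, vs4 and vs5 all remain

tracker.update("A", ["vs1", "vs2"])     # vs3 is deleted on BIG-IP A itself
after_delete = tracker.known()          # now vs3 is gone
```

In the per-device model a non-floating virtual server only drops out once the device that owned it stops reporting it, so messages from the other devices no longer cause flapping.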
You can see that the cluster of devices is treated as one by F5 DNS:

If you recall the original problem statement, we don’t want F5 DNS to remove non-floating virtuals from the configuration as it receives messages from BIG-IPs unaware of other BIG-IP objects. In v13, virtual servers are tracked by server and device. A virtual server will only be removed if it was removed from all devices that had knowledge of it.

So we’ve seen the GUI; what does it look like under the hood? Here’s the tmsh output:

    gtm server gslb_server {
        addresses {
            10.10.10.11 {
                device-name DG1
            }
            10.10.10.12 {
                device-name DG1
            }
            10.10.10.13 {
                device-name DG1
            }
        }
        datacenter dc1
        devices {
            DG1 {
                addresses {
                    10.10.10.11 { }
                    10.10.10.12 { }
                    10.10.10.13 { }
                }
            }
        }
        monitor bigip
        product bigip
    }

Note the repetition there? The old schema is in red, and the new schema in blue (the top-level addresses block is the old schema; the devices block is the new one). Also note that if you execute the tmsh list gtm server <name> addresses command, you will get an api-status-warning that the property has been deprecated and will likely be removed in future versions.

One final note: if you grow or shrink your cluster, you will need to manually update the F5 DNS server object device to reflect that by adding or removing the appropriate self-IP addresses.

(Much thanks to Phil Cooper for the excellent source material)

APM Cookbook: Monitoring APM

I have a customer who recently needed to monitor the APM logon page but noticed the default HTTP monitor was eating up APM access sessions. To address this issue, the HTTP monitor needs to retain the MRHSession cookie as it follows the redirect from / to /my.policy. After the monitor determines the health of the APM logon page, it also needs to access the logout page to delete its current access session. To accomplish this we can use the cookie jar options in curl:

- -c creates the cookie jar
- -b uses an existing cookie jar

We also tell curl to follow redirects with the -L argument.

In the example code below, the monitor is looking for the F5 licensing statement at the bottom of the APM logon page. If you've customized the APM logon page, this monitor can easily be modified to fit your specific requirements.

    #!/bin/sh
    #
    # (c) Copyright 1996-2014 F5 Networks, Inc.
    #
    # This software is confidential and may contain trade secrets that are the
    # property of F5 Networks, Inc. No part of the software may be disclosed
    # to other parties without the express written consent of F5 Networks, Inc.
    # It is against the law to copy the software. No part of the software may
    # be reproduced, transmitted, or distributed in any form or by any means,
    # electronic or mechanical, including photocopying, recording, or information
    # storage and retrieval systems, for any purpose without the express written
    # permission of F5 Networks, Inc. Our services are only available for legal
    # users of the program, for instance in the event that we extend our services
    # by offering the updating of files via the Internet.
    #
    # @(#) $Id: http_monitor_cURL+GET,v 1.0 2007/06/28 16:10:15 deb Exp $
    # (based on sample_monitor,v 1.3 2005/02/04 18:47:17 saxon)
    #
    # these arguments supplied automatically for all external monitors:
    # $1 = IP (IPv6 notation. IPv4 addresses are passed in the form
    #          ::ffff:w.x.y.z
    #          where "w.x.y.z" is the IPv4 address)
    # $2 = port (decimal, host byte order)
    #

    # remove IPv6/IPv4 compatibility prefix (LTM passes addresses in IPv6 format)
    IP=`echo ${1} | sed 's/::ffff://'`
    PORT=${2}

    PIDFILE="/var/run/`basename ${0}`.${IP}_${PORT}.pid"
    # kill off the last instance of this monitor if hung and log current pid
    if [ -f $PIDFILE ]
    then
        echo "EAV exceeded runtime needed to kill ${IP}:${PORT}" | logger -p local0.error
        kill -9 `cat $PIDFILE` > /dev/null 2>&1
    fi
    echo "$$" > $PIDFILE

    # send request (following redirects, saving cookies) & check for expected response
    curl -Lsk https://${IP}:${PORT} -c cookiejar.txt | grep "This product is licensed from F5 Networks" > /dev/null

    # mark node UP if expected response was received
    if [ $? -eq 0 ]
    then
        rm -f $PIDFILE
        echo "UP"
    else
        rm -f $PIDFILE
    fi

    # delete APM session by replaying the saved cookies against the logout page
    curl -Lsk https://${IP}:${PORT}/my.logout.php3 -b cookiejar.txt > /dev/null
    exit

You can save this monitor to your F5 by following SOL13423, and for information on implementing external monitors click here.

DNS::question name - Modifying a DNS Suffix When Your Windows Client Appends It During Recursive Lookups

Quite some time ago I was asked about a 2-second delay when performing recursive lookups against the BIG-IP. My first thought was that there was an issue with transport. I then decided to deploy it in my own lab. To my surprise, I experienced the exact same issue, though I knew there were no transport problems in my own environment.

To give a little more color to the use case at hand, my Windows domain controller is performing recursive lookups against my BIG-IP. My BIG-IP is configured as a recursive DNS server using a transparent cache. This simply means that I am forwarding the request to another upstream DNS server and caching its response. Pretty simple, right? Well, there is a little more to it, as we will get into.

My next step was to look at the DNS query to see what it looked like at the BIG-IP. After a quick search on DevCentral for an iRule to log it, I applied it to my DNS listener and tailed the log.

    when DNS_REQUEST {
        log local0. "my question name: [DNS::question name]"
    }

Interestingly enough, I found that the DNS query from the DC was actually appending the DNS suffix using its actual domain name. I also ran a tcpdump from the BIG-IP to see the actual request, which confirmed the results of my logs. I then logged into my DC to check the network connection settings. Everything looked OK, but I am of course no expert at Windows network settings. As far as I know, this is how every DC is configured by default, as I have not modified any of these settings.

Now I was stuck and pretty sure the only way to modify the query was to go to the magical language of Tcl. I admit I am no expert, so I did some DevCentral searches and reached out to my team, who provided some great feedback. In the end, I applied the following iRule, and DNS resolution is occurring without the appended DNS suffix. Hope this helps!

    when DNS_REQUEST {
        if { [DNS::question name] ends_with "demo.lab" } {
            # strip the trailing ".demo.lab" (9 characters) for logging
            set queryName [string range [DNS::question name] 0 end-9]
            log local0. "My new question name: $queryName"
            # answer the suffixed query right away instead of forwarding it upstream
            DNS::return
        }
    }

What Is BIG-IP?

tl;dr - BIG-IP is a collection of hardware platforms and software solutions providing services focused on security, reliability, and performance.

F5's BIG-IP is a family of products covering software and hardware designed around application availability, access control, and security solutions. That's right, the BIG-IP name is interchangeable between F5's software and hardware application delivery controller and security products. This is different from BIG-IQ, a suite of management and orchestration tools, and F5 Silverline, F5's SaaS platform. When people refer to BIG-IP, this can mean a single software module in BIG-IP's software family or it could mean a hardware chassis sitting in your datacenter. This can cause a lot of confusion when people say they have a question about "BIG-IP", but we'll break it down here to reduce the confusion.

BIG-IP Software

BIG-IP software products are licensed modules that run on top of F5's Traffic Management Operating System® (TMOS). This custom operating system is an event-driven operating system designed specifically to inspect network and application traffic and make real-time decisions based on the configurations you provide. The BIG-IP software can run on hardware or in virtualized environments. Virtualized systems provide BIG-IP software functionality where hardware implementations are unavailable, including public clouds and various managed infrastructures where rack space is a critical commodity.

BIG-IP Primary Software Modules

- BIG-IP Local Traffic Manager (LTM) - Central to F5's full traffic proxy functionality, LTM provides the platform for creating virtual servers and performance, service, protocol, authentication, and security profiles to define and shape your application traffic. Most other modules in the BIG-IP family use LTM as a foundation for enhanced services.
- BIG-IP DNS - Formerly Global Traffic Manager, BIG-IP DNS provides security and load balancing features similar to those LTM offers, but at a global/multi-site scale. BIG-IP DNS offers services to distribute and secure DNS traffic advertising your application namespaces.
- BIG-IP Access Policy Manager (APM) - Provides federation, SSO, application access policies, and secure web tunneling. Allow granular access to your various applications and virtualized desktop environments, or just go full VPN tunnel.
- Secure Web Gateway Services (SWG) - Paired with APM, SWG enables access policy control for internet usage. You can allow, block, verify and log traffic with APM's access policies, allowing flexibility around your acceptable internet and public web application use. You know.... contractors and interns shouldn't use Facebook, but you're not going to be responsible for why the CFO can't access their cat pics.
- BIG-IP Application Security Manager (ASM) - This is F5's web application firewall (WAF) solution. Traditional firewalls and layer 3 protection don't understand the complexities of many web applications. ASM allows you to tailor acceptable and expected application behavior on a per-application basis. Zero day, DoS, and click fraud all rely on traditional security devices' inability to protect unique application needs; ASM fills the gap between traditional firewalls and tailored granular application protection.
- BIG-IP Advanced Firewall Manager (AFM) - AFM is designed to reduce the hardware and extra hops required when ADCs are paired with traditional firewalls. Operating at L3/L4, AFM helps protect traffic destined for your data center. Paired with ASM, you can implement protection services at L3 - L7 for a full ADC and security solution in one box or virtual environment.

BIG-IP Hardware

BIG-IP hardware offers several types of purpose-built custom solutions, all designed in-house by our fantastic engineers; no white boxes here. BIG-IP hardware is offered via series releases, each offering improvements in performance and features determined by customer requirements. These may include increased port capacity, traffic throughput, CPU performance, FPGA feature functionality for hardware-based scalability, and virtualization capabilities. There are two primary variations of BIG-IP hardware: single chassis designs and VIPRION modular designs. Each offers unique advantages for internal and collocated infrastructures. Updates in processor architecture, FPGA, and interface performance gains are common, so we recommend referring to F5's hardware page for more information.

Configuring Decision Logging for the F5 BIG-IP Global Traffic Manager

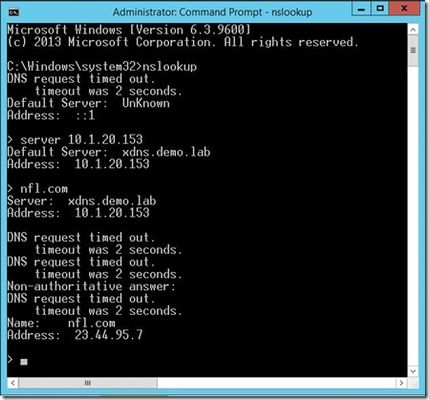

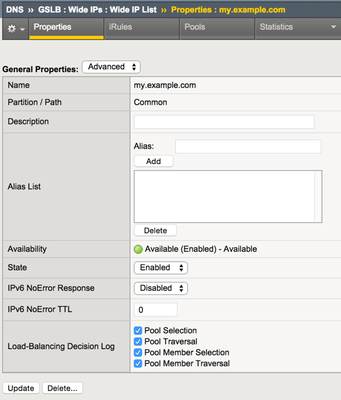

I was working on a GTM solution, and with my limited lab I wanted to make sure that the decisions that F5 BIG-IP Global Traffic Manager made at the wideIP and pool level were as evident in the logs as they were consistent in my test results. It turns out there are some fancy little checkboxes in the wideIP configuration that you can check to enable such logs. You might notice, however, that upon enabling these checkboxes the logs are nowhere to be found. This is because there are other necessary steps. You need to configure a few objects to get those logs flowing.

Log Publisher

The first object is the log publisher. Given how much detail flows in the decision logging, I’d highly recommend using an HSL profile to log to a remote server, but for the purposes of testing I used the local syslog. This can also be done with tmsh.

    sys log-config publisher gtm_decision_logging {
        destinations {
            local-syslog { }
        }
    }

DNS Logging Profile

Next, create a DNS logging profile, making sure to select the Log Publisher you created in the previous step. For testing purposes I enabled the log responses and query ID as well, but those are disabled by default. This can also be created in tmsh.

    ltm profile dns-logging gtm_decision_logging {
        enable-response-logging yes
        include-query-id yes
        log-publisher gtm_decision_logging
    }

Custom DNS Profile

Now create a custom DNS profile. The only custom properties necessary are at the bottom of the profile, where you enable logging and select the logging profile. This can also be configured in tmsh.

    ltm profile dns gtm_decision_logging {
        app-service none
        defaults-from dns
        enable-logging yes
        log-profile gtm_decision_logging
    }

Apply the DNS Profile

Now that all the objects are created, you can reference the DNS profile in the listener. In tmsh, you can modify the listener by adding the profile or, if one already exists, replacing it.

    modify gtm listener gtmlistener profiles replace-all-with { udp_gtm_dns gtm_decision_logging }

Log Details

Once you have all the objects configured and the DNS profile referenced in your listener, the logging should be hitting /var/log/ltm. For this first query, the emea pool is selected, but there is no probe data for my primary load balancing method, and the none alternate method skips to the fallback, which uses the configured fallback IP to respond to the client.

    2015-06-03 08:54:21 ltm1.dc.test qid 11139 from 192.168.102.1#64536: view none: query: my.example.com IN A + (192.168.102.5%0)
    2015-06-03 08:54:21 ltm1.dc.test qid 11139 from 192.168.102.1#64536 [my.example.com A] [round robin selected pool (emea)] [pool member check succeeded (vip3:192.168.103.12) - pool member state is available (green)] [QoS skipped pool member (vip3:192.168.103.12) - path has unmeasured RTT] [pool member check succeeded (vip4:192.168.103.13) - pool member state is available (green)] [QoS skipped pool member (vip4:192.168.103.13) - path has unmeasured RTT] [failed to select pool member by preferred load balancing method] [Using none load balancing method] [failed to select pool member by alternate load balancing method] [selected configured fallback IP]
    2015-06-03 08:54:21 ltm1.dc.test qid 11139 to 192.168.102.1#64536: [NOERROR qr,aa,rd] response: my.example.com. 30 IN A 192.168.103.99;

In this second request, the emea pool is again selected, but now there is probe data, so the pool member is selected as appropriate.

    2015-06-03 08:55:43 ltm1.dc.test qid 6201 from 192.168.102.1#61503: view none: query: my.example.com IN A + (192.168.102.5%0)
    2015-06-03 08:55:43 ltm1.dc.test qid 6201 from 192.168.102.1#61503 [my.example.com A] [round robin selected pool (emea)] [pool member check succeeded (vip3:192.168.103.12) - pool member state is available (green)] [QoS selected pool member (vip3:192.168.103.12) - QoS score (2082756232) is higher] [pool member check succeeded (vip4:192.168.103.13) - pool member state is available (green)] [QoS skipped pool member (vip4:192.168.103.13) from two pool members with equal scores] [QoS selected pool member (vip3:192.168.103.12)]
    2015-06-03 08:55:43 ltm1.dc.test qid 6201 to 192.168.102.1#61503: [NOERROR qr,aa,rd] response: my.example.com. 30 IN A 192.168.103.12;

In this final request, the americas pool is selected, but there is no valid topology score for the pool members, so the query is refused.

    2015-06-03 08:55:53 ltm1.dc.test qid 23580 from 192.168.102.1#59437: view none: query: my.example.com IN A + (192.168.102.5%0)
    2015-06-03 08:55:53 ltm1.dc.test qid 23580 from 192.168.102.1#59437 [my.example.com A] [round robin selected pool (americas)] [pool member check succeeded (vip1:192.168.103.10) - pool member state is available (green)] [QoS selected pool member (vip1:192.168.103.10) - QoS score (0) is higher] [pool member check succeeded (vip2:192.168.103.11) - pool member state is available (green)] [QoS skipped pool member (vip2:192.168.103.11) from two pool members with equal scores] [QoS selected pool member (vip1:192.168.103.10)] [topology load balancing method failed to select pool member (vip1:192.168.103.10) - topology score is 0] [failed to select pool member by preferred load balancing method] [selected configured option Return To DNS]
    2015-06-03 08:55:53 ltm1.dc.test qid 23580 to 192.168.102.1#59437: [REFUSED qr,rd] response: empty

Yeah, yeah, skip all that and give me the good stuff

If you want to test it quickly, you can save the config below to a file (/var/tmp/gtmlogging.txt in this example) and then merge it in. Finally, modify the wideIP and listener and you’re good to go!

    ###
    ### configuration: /var/tmp/gtmlogging.txt
    ###
    sys log-config publisher gtm_decision_logging {
        destinations {
            local-syslog { }
        }
    }
    ltm profile dns-logging gtm_decision_logging {
        enable-response-logging yes
        include-query-id yes
        log-publisher gtm_decision_logging
    }
    ltm profile dns gtm_decision_logging {
        app-service none
        defaults-from dns
        enable-logging yes
        log-profile gtm_decision_logging
    }

    ###
    ### Merge Command
    ###
    tmsh load sys config merge file /var/tmp/gtmlogging.txt

    ###
    ### Modify wideIP and Listener
    ###
    tmsh modify gtm wideip my.example.com load-balancing-decision-log-verbosity { pool-member-selection pool-member-traversal pool-selection pool-traversal }
    tmsh modify gtm listener gtmlistener profiles replace-all-with { udp_gtm_dns gtm_decision_logging }
    tmsh save sys config

Configuring ExternalDNS for Kubernetes with F5 CIS, LTM and DNS

Part of the internet plumbing that allows clients to easily reach applications is DNS, which maps a hostname to the IP address of an application. DNS is often configured statically, by hand. However, when applications change dynamically, as they do in a Kubernetes environment, we need DNS to keep up without human intervention.

Luckily, F5 BIG-IP customers have the pieces required not only to take care of ingress into dynamic resources in Kubernetes using F5 Container Ingress Service (CIS) and Local Traffic Manager (LTM), but also to take care of DNS with the F5 DNS module. Using ExternalDNS, administrators can control the DNS records dynamically using Kubernetes Custom Resource Definitions (CRDs).

F5 Product Management Engineer Mark Dittmer provides how-to videos and example GitHub repos on using this feature.

ExternalDNS for Kubernetes using F5 CIS with BIG-IP LTM and DNS

GitHub Repo: https://github.com/mdditt2000/k8s-bigip-ctlr/tree/main/user_guides/externaldns/single-cluster

ExternalDNS for Multi-Site Kubernetes using F5 CIS with BIG-IP LTM and DNS

GitHub Repo: https://github.com/mdditt2000/k8s-bigip-ctlr/tree/main/user_guides/externaldns/multi-cluster
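The idea of DNS "keeping up without human intervention" usually comes down to a reconciliation step: compare the records the cluster wants with the records that currently exist, and compute the difference. The sketch below shows that general pattern only. It is not how CIS or ExternalDNS is implemented, and the `reconcile` function, hostnames and addresses are hypothetical.

```python
from typing import Dict, List, Tuple


def reconcile(
    desired: Dict[str, str], actual: Dict[str, str]
) -> Tuple[Dict[str, str], Dict[str, str], List[str]]:
    """Compute the DNS changes needed to move `actual` to `desired`.

    Both arguments map hostname -> IP address. Returns the records to
    create, the records whose address must change, and hostnames to delete.
    """
    to_create = {h: ip for h, ip in desired.items() if h not in actual}
    to_update = {
        h: ip for h, ip in desired.items() if h in actual and actual[h] != ip
    }
    to_delete = sorted(h for h in actual if h not in desired)
    return to_create, to_update, to_delete


# Hypothetical state: what the cluster declares vs. what DNS currently serves.
desired = {"cafe.example.com": "192.0.2.10", "shop.example.com": "192.0.2.20"}
actual = {"cafe.example.com": "192.0.2.99", "old.example.com": "192.0.2.30"}

create, update, delete = reconcile(desired, actual)
```

Running a step like this whenever the declared state changes is what removes the manual DNS edits; in the setup described above, the declared state would come from the ExternalDNS custom resources in the linked repos.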