APM Cookbook: Single Sign On (SSO) using Kerberos

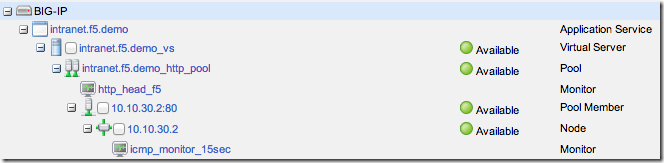

To get the APM Cookbook series moving along, I’ve decided to help out by documenting the common APM solutions I help customers and partners with on a regular basis. Kerberos SSO is nothing new, but seems to stump people who have never used Kerberos before. Getting Kerberos SSO to work with APM is straight forward once you have the Active Directory components configured. Overview I have a pre-configured web service (IIS 7.5/Sharepoint 2010) that is configured for Windows Authentication, which will send a “Negotiate” in the header of the “401 Request for Authorization”. Make sure the web service is configured to send the correct header before starting the APM configuration by accessing the website directly and viewing the headers using browser tools. In my example, I used the Sharepoint 2010/2013 iApp to build the LTM configuration. I’m using a single pool member, sp1.f5.demo (10.10.30.2) listening on HTTP and the Virtual Server listening on HTTPS performing SSL offload. Step 1 - Create a delegation account on your domain 1.1 Open Active Directory Users and Computers administrative tool and create a new user account. User logon name: host/apm-kcd.f5.demo User logon name (pre-Windows 2000): apm-kcd Set the password and not expire 1.2 Alter the account and set the servicePrincipcalName. Run setspn from the command line: setspn –A host/apm-kcd.f5.demo apm-kcd A delegation tab will now be available for this user. Step 2 - Configure the SPN 2.1 Open Active Directory Users and Computers administrative tool and select the user account created in the previous step. Edit the Properties for this user Select the Delegation tab Select: Trust this user for delegation to specified services only Select: Use any authentication protocol Select Add, to add services. Select Users or Computers… Enter the host name, in my example I will be adding HTTP service for sp1.f5.demo (SP1). Select Check Names and OK Select the http Service Type and OK 2.2 Make sure there are no duplicate SPNs and run setspn –x from the command line. Step 3 - Check Forward and Reverse DNS DNS is critical and a missing PTR is common error I find when troubleshooting Kerberos SSO problems. From the BIG-IP command line test forward and reverse records exist for the web service using dig: # dig sp1.f5.demo ;; QUESTION SECTION: ;sp1.f5.demo. IN A ;; ANSWER SECTION: sp1.f5.demo. 1200 IN A 10.10.30.2 # dig -x 10.10.30.2 ;; QUESTION SECTION: ;2.30.10.10.in-addr.arpa. IN PTR ;; ANSWER SECTION: 2.30.10.10.in-addr.arpa. 1200 IN PTR sp1.f5.demo. Step 4 - Create the APM Configuration In this example I will use a Logon Page to capture the user credentials that will be authenticated against Active Directory and mapped to the SSO variables for the Kerberos SSO. 4.1 Configure AAA Server for Authentication Access Policy >> AAA Servers >> Active Directory >> “Create” Supply the following: Name: f5.demo_ad_aaa Domain Name: f5.demo Domain Controller: (Optional – BIG-IP will use DNS to discover if left blank) Admin Name and Password Select “Finished" to save. 4.2 Configure Kerberos SSO Access Policy >> SSO Configurations >> Kerberos >> “Create” Supply the following: Name: f5.demo_kerberos_sso Username Source: session.sso.token.last.username User Realm Source: session.ad.last.actualdomain Kerberos Realm: F5.DEMO Account Name: apm-kcd (from Step 1) Account Password & Confirm Account Password (from Step1) Select “Finished” to save. 4.3 Create an Access Profile and Policy We can now bring it all together using the Visual Policy Editor (VPE). Access Policy >> Access Profiles >> Access Profile List >> “Create” Supply the following: Name: intranet.f5.demo_sso_ap SSO Configuration: f5.demo_kerberos_sso Languages: English (en) Use the default settings for all other settings. Select “Finished” to save. 4.4 Edit the Access Policy in the VPE Access Policy >> Access Profiles >> Access Profile List >> “Edit” (intranet.f5.demo_sso_ap) On the fallback branch after the Start object, add a Logon Page object. Leave the defaults and “Save”. On the fallback branch after the Logon Page object, add an AD Auth object. Select the Server Select “Save” when your done. On the Successful branch after the AD Auth object, add a SSO Credential Mapping object. Leave the defaults and “Save”. On the fallback branch after the SSO Credential Mapping, change Deny ending to Allow. The finished policy should look similar to this: Don't forget to “Apply Access Policy”. Step 5 – Attach the APM Policy to the Virtual Server and Test 5.1 Edit the Virtual Server Local Traffic >> Virtual Servers >> Virtual Server List >> intranet.f5.demo_vs Scroll down to the Access Policy section and select the Access Profile. Select “Update” to save. 5.2 Test Open a browser, access the Virtual Server URL (https://intranet.f5.demo in my example), authenticate and verify the client is automatically logged on (SSO) to the web service. To verify Kerberos SSO has worked correctly, check /var/log/apm on APM by turning on debug. You should see log events similar to the ones below when the BIG-IP has fetched a Kerberos Ticket. info websso.1[9041]: 014d0011:6: 33186a8c: Websso Kerberos authentication for user 'test.user' using config '/Common/f5.demo_kerberos_sso' debug websso.1[9041]: 014d0018:7: sid:33186a8c ctx:0x917e4a0 server address = ::ffff:10.10.30.2 debug websso.1[9041]: 014d0021:7: sid:33186a8c ctx:0x917e4a0 SPN = HTTP/sp1.f5.demo@F5.DEMO debug websso.1[9041]: 014d0023:7: S4U ======> ctx: 33186a8c, sid: 0x917e4a0, user: test.user@F5.DEMO, SPN: HTTP/sp1.f5.demo@F5.DEMO debug websso.1[9041]: 014d0001:7: Getting UCC:test.user@F5.DEMO@F5.DEMO, lifetime:36000 debug websso.1[9041]: 014d0001:7: fetched new TGT, total active TGTs:1 debug websso.1[9041]: 014d0001:7: TGT: client=apm-kcd@F5.DEMO server=krbtgt/F5.DEMO@F5.DEMO expiration=Tue Apr 29 08:33:42 2014 flags=40600000 debug websso.1[9041]: 014d0001:7: TGT expires:1398724422 CC count:0 debug websso.1[9041]: 014d0001:7: Initialized UCC:test.user@F5.DEMO@F5.DEMO, lifetime:36000 kcc:0x92601e8 debug websso.1[9041]: 014d0001:7: UCCmap.size = 1, UCClist.size = 1 debug websso.1[9041]: 014d0001:7: S4U ======> - NO cached S4U2Proxy ticket for user: test.user@F5.DEMO server: HTTP/sp1.f5.demo@F5.DEMO - trying to fetch debug websso.1[9041]: 014d0001:7: S4U ======> - NO cached S4U2Self ticket for user: test.user@F5.DEMO - trying to fetch debug websso.1[9041]: 014d0001:7: S4U ======> - fetched S4U2Self ticket for user: test.user@F5.DEMO debug websso.1[9041]: 014d0001:7: S4U ======> trying to fetch S4U2Proxy ticket for user: test.user@F5.DEMO server: HTTP/sp1.f5.demo@F5.DEMO debug websso.1[9041]: 014d0001:7: S4U ======> fetched S4U2Proxy ticket for user: test.user@F5.DEMO server: HTTP/sp1.f5.demo@F5.DEMO debug websso.1[9041]: 014d0001:7: S4U ======> OK! Conclusion Like I said in the beginning, once you know how Kerberos SSO works with APM, it’s a piece of cake!7.9KViews1like28CommentsCan SSL VPN client handle multiple simultaneous sessions?

From a single Windows machine, we have a need to have the F5 SSL VPN client connect both to multiple external organizations at once, and also to connect to single organizations by multiple tunnels, with separate credentials. If there's a way to do either of these, it's not obvious to us. It seems like only one SSL VPN client instance can run per machine, and that instance can only handle a single tunnel, with a single set of credentials, to a single remote location. It's testament to F5's market penetration that we find ourselves needing to do more than that. Is there a way? Thanks, Whit663Views0likes4CommentsLDAPS Monitor with Certificate Expiration

Hi Team, I have been working with my AD team trying to resolve a problem where they forget to update a Domain Controller certificate and it expires and ADLDAPS queries fail since they dont bind to expired certificates. They have requested to see if we can drop a member out of the pool if the certificate is expired ( ie, not a valid SSL cert ) I have been messing with the LDAP Health monitor, turning on the Security settings, but I dont believe this would actually check that a certificate is valid or not. I know with server side SSL configuration you can enable SSL authentication but would just stop traffic from flow, not actually drop a member out of the pool. Any ideas ?643Views0likes4CommentsProblems load balancing printing

Followed this guide to configure load balancing MS printing with npath routing: http://blog.loadbalancer.org/load-balancing-microsoft-print-server/ The problem is when I try to connect to the printer with the FQDN of virtual server (eg. \\virtualserver.mydomain.com) I get the error "Operation could not be completed (error 0x00000709). Double check the printer name and make sure that the printer is connected to the network.". If I connect to the VIP (eg. \\192.168.0.10) it works fine. If I connect to the host directly (by hostname or IP) it works fine. Any ideas?1.6KViews0likes3CommentsOutlook Client Prompting for Password

A few months ago we implemented Exchange 2010 with the help of our LTMs. However it has come to light that people have been complaining about how sometimes they are being prompted to log in after they've been logged in all day. What they don't understand is that when they switch between networks "Wired to wireless" or vice versa, their IP address changes so the CAS server they land on is likely different, prompting them to re-authenticate. I don't suppose there is an F5 solution to stop these password prompt. The best solution I came up with was to run Outlook anywhere and do the persistence based on cookies. Are there any other ideas out there?624Views0likes7Comments5 Years Later: OpenAJAX Who?

Five years ago the OpenAjax Alliance was founded with the intention of providing interoperability between what was quickly becoming a morass of AJAX-based libraries and APIs. Where is it today, and why has it failed to achieve more prominence? I stumbled recently over a nearly five year old article I wrote in 2006 for Network Computing on the OpenAjax initiative. Remember, AJAX and Web 2.0 were just coming of age then, and mentions of Web 2.0 or AJAX were much like that of “cloud” today. You couldn’t turn around without hearing someone promoting their solution by associating with Web 2.0 or AJAX. After reading the opening paragraph I remembered clearly writing the article and being skeptical, even then, of what impact such an alliance would have on the industry. Being a developer by trade I’m well aware of how impactful “standards” and “specifications” really are in the real world, but the problem – interoperability across a growing field of JavaScript libraries – seemed at the time real and imminent, so there was a need for someone to address it before it completely got out of hand. With the OpenAjax Alliance comes the possibility for a unified language, as well as a set of APIs, on which developers could easily implement dynamic Web applications. A unified toolkit would offer consistency in a market that has myriad Ajax-based technologies in play, providing the enterprise with a broader pool of developers able to offer long term support for applications and a stable base on which to build applications. As is the case with many fledgling technologies, one toolkit will become the standard—whether through a standards body or by de facto adoption—and Dojo is one of the favored entrants in the race to become that standard. -- AJAX-based Dojo Toolkit , Network Computing, Oct 2006 The goal was simple: interoperability. The way in which the alliance went about achieving that goal, however, may have something to do with its lackluster performance lo these past five years and its descent into obscurity. 5 YEAR ACCOMPLISHMENTS of the OPENAJAX ALLIANCE The OpenAjax Alliance members have not been idle. They have published several very complete and well-defined specifications including one “industry standard”: OpenAjax Metadata. OpenAjax Hub The OpenAjax Hub is a set of standard JavaScript functionality defined by the OpenAjax Alliance that addresses key interoperability and security issues that arise when multiple Ajax libraries and/or components are used within the same web page. (OpenAjax Hub 2.0 Specification) OpenAjax Metadata OpenAjax Metadata represents a set of industry-standard metadata defined by the OpenAjax Alliance that enhances interoperability across Ajax toolkits and Ajax products (OpenAjax Metadata 1.0 Specification) OpenAjax Metadata defines Ajax industry standards for an XML format that describes the JavaScript APIs and widgets found within Ajax toolkits. (OpenAjax Alliance Recent News) It is interesting to see the calling out of XML as the format of choice on the OpenAjax Metadata (OAM) specification given the recent rise to ascendancy of JSON as the preferred format for developers for APIs. Granted, when the alliance was formed XML was all the rage and it was believed it would be the dominant format for quite some time given the popularity of similar technological models such as SOA, but still – the reliance on XML while the plurality of developers race to JSON may provide some insight on why OpenAjax has received very little notice since its inception. Ignoring the XML factor (which undoubtedly is a fairly impactful one) there is still the matter of how the alliance chose to address run-time interoperability with OpenAjax Hub (OAH) – a hub. A publish-subscribe hub, to be more precise, in which OAH mediates for various toolkits on the same page. Don summed it up nicely during a discussion on the topic: it’s page-level integration. This is a very different approach to the problem than it first appeared the alliance would take. The article on the alliance and its intended purpose five years ago clearly indicate where I thought this was going – and where it should go: an industry standard model and/or set of APIs to which other toolkit developers would design and write such that the interface (the method calls) would be unified across all toolkits while the implementation would remain whatever the toolkit designers desired. I was clearly under the influence of SOA and its decouple everything premise. Come to think of it, I still am, because interoperability assumes such a model – always has, likely always will. Even in the network, at the IP layer, we have standardized interfaces with vendor implementation being decoupled and completely different at the code base. An Ethernet header is always in a specified format, and it is that standardized interface that makes the Net go over, under, around and through the various routers and switches and components that make up the Internets with alacrity. Routing problems today are caused by human error in configuration or failure – never incompatibility in form or function. Neither specification has really taken that direction. OAM – as previously noted – standardizes on XML and is primarily used to describe APIs and components - it isn’t an API or model itself. The Alliance wiki describes the specification: “The primary target consumers of OpenAjax Metadata 1.0 are software products, particularly Web page developer tools targeting Ajax developers.” Very few software products have implemented support for OAM. IBM, a key player in the Alliance, leverages the OpenAjax Hub for secure mashup development and also implements OAM in several of its products, including Rational Application Developer (RAD) and IBM Mashup Center. Eclipse also includes support for OAM, as does Adobe Dreamweaver CS4. The IDE working group has developed an open source set of tools based on OAM, but what appears to be missing is adoption of OAM by producers of favored toolkits such as jQuery, Prototype and MooTools. Doing so would certainly make development of AJAX-based applications within development environments much simpler and more consistent, but it does not appear to gaining widespread support or mindshare despite IBM’s efforts. The focus of the OpenAjax interoperability efforts appears to be on a hub / integration method of interoperability, one that is certainly not in line with reality. While certainly developers may at times combine JavaScript libraries to build the rich, interactive interfaces demanded by consumers of a Web 2.0 application, this is the exception and not the rule and the pub/sub basis of OpenAjax which implements a secondary event-driven framework seems overkill. Conflicts between libraries, performance issues with load-times dragged down by the inclusion of multiple files and simplicity tend to drive developers to a single library when possible (which is most of the time). It appears, simply, that the OpenAJAX Alliance – driven perhaps by active members for whom solutions providing integration and hub-based interoperability is typical (IBM, BEA (now Oracle), Microsoft and other enterprise heavyweights – has chosen a target in another field; one on which developers today are just not playing. It appears OpenAjax tried to bring an enterprise application integration (EAI) solution to a problem that didn’t – and likely won’t ever – exist. So it’s no surprise to discover that references to and activity from OpenAjax are nearly zero since 2009. Given the statistics showing the rise of JQuery – both as a percentage of site usage and developer usage – to the top of the JavaScript library heap, it appears that at least the prediction that “one toolkit will become the standard—whether through a standards body or by de facto adoption” was accurate. Of course, since that’s always the way it works in technology, it was kind of a sure bet, wasn’t it? WHY INFRASTRUCTURE SERVICE PROVIDERS and VENDORS CARE ABOUT DEVELOPER STANDARDS You might notice in the list of members of the OpenAJAX alliance several infrastructure vendors. Folks who produce application delivery controllers, switches and routers and security-focused solutions. This is not uncommon nor should it seem odd to the casual observer. All data flows, ultimately, through the network and thus, every component that might need to act in some way upon that data needs to be aware of and knowledgeable regarding the methods used by developers to perform such data exchanges. In the age of hyper-scalability and über security, it behooves infrastructure vendors – and increasingly cloud computing providers that offer infrastructure services – to be very aware of the methods and toolkits being used by developers to build applications. Applying security policies to JSON-encoded data, for example, requires very different techniques and skills than would be the case for XML-formatted data. AJAX-based applications, a.k.a. Web 2.0, requires different scalability patterns to achieve maximum performance and utilization of resources than is the case for traditional form-based, HTML applications. The type of content as well as the usage patterns for applications can dramatically impact the application delivery policies necessary to achieve operational and business objectives for that application. As developers standardize through selection and implementation of toolkits, vendors and providers can then begin to focus solutions specifically for those choices. Templates and policies geared toward optimizing and accelerating JQuery, for example, is possible and probable. Being able to provide pre-developed and tested security profiles specifically for JQuery, for example, reduces the time to deploy such applications in a production environment by eliminating the test and tweak cycle that occurs when applications are tossed over the wall to operations by developers. For example, the jQuery.ajax() documentation states: By default, Ajax requests are sent using the GET HTTP method. If the POST method is required, the method can be specified by setting a value for the type option. This option affects how the contents of the data option are sent to the server. POST data will always be transmitted to the server using UTF-8 charset, per the W3C XMLHTTPRequest standard. The data option can contain either a query string of the form key1=value1&key2=value2 , or a map of the form {key1: 'value1', key2: 'value2'} . If the latter form is used, the data is converted into a query string using jQuery.param() before it is sent. This processing can be circumvented by setting processData to false . The processing might be undesirable if you wish to send an XML object to the server; in this case, change the contentType option from application/x-www-form-urlencoded to a more appropriate MIME type. Web application firewalls that may be configured to detect exploitation of such data – attempts at SQL injection, for example – must be able to parse this data in order to make a determination regarding the legitimacy of the input. Similarly, application delivery controllers and load balancing services configured to perform application layer switching based on data values or submission URI will also need to be able to parse and act upon that data. That requires an understanding of how jQuery formats its data and what to expect, such that it can be parsed, interpreted and processed. By understanding jQuery – and other developer toolkits and standards used to exchange data – infrastructure service providers and vendors can more readily provide security and delivery policies tailored to those formats natively, which greatly reduces the impact of intermediate processing on performance while ensuring the secure, healthy delivery of applications. API Jabberwocky: You Say Tomay-to and I Say Potah-to OpenAjax Metadata 1.0 and the Adobe Dreamweaver Widget Browser OpenAjax Alliance AJAX-based Dojo Toolkit The Stealthy Ascendancy of JSON JSON Continues its Winning Streak Over XML JSON versus XML: Your Choice Matters More Than You Think I am in your HTTP headers, attacking your application The Web 2.0 API: From collaborating to compromised IT as a Service: A Stateless Infrastructure Architecture Model JSON Activity Streams and the Other Consumerization of IT350Views0likes0CommentsSharepoint 2010 Health Monitor

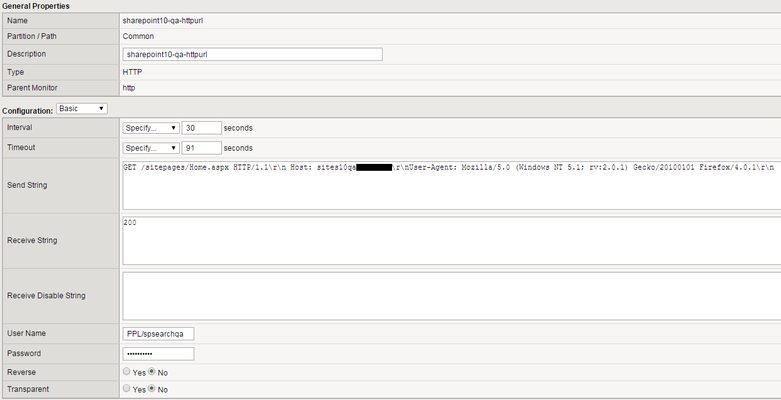

I have an HTTP GET health monitor setup for our Sharepoint 2010 servers. The health montior seems to work as I am seeing 200s come back from the server after authentication. However, what I'm also seeing is the health monitor sending along several GETs without the NTLM credentials and those come back with 401 authentication errors: Logs from Sharepoint server...top two are not successful as the LTM did not send along the credentials of PPL\spsearchqa. Bottom two are successful with the creds: 2015-04-24 13:48:04 xxx.xxx.xxx.xxx GET /sitepages/Home.aspx - 80 - xxx.xxx.xxx.xxx Mozilla/5.0+(Windows+NT+5.1;+rv:2.0.1)+Gecko/20100101+Firefox/4.0.1 401 2 5 5 2015-04-24 13:48:04 xxx.xxx.xxx.xxx GET /sitepages/Home.aspx - 80 - xxx.xxx.xxx.xxx Mozilla/5.0+(Windows+NT+5.1;+rv:2.0.1)+Gecko/20100101+Firefox/4.0.1 401 1 2148074254 5 2015-04-24 13:48:08 xxx.xxx.xxx.xxx GET /sitepages/Home.aspx - 80 PPL\spsearchqa xxx.xxx.xxx.xxx Mozilla/5.0+(Windows+NT+5.1;+rv:2.0.1)+Gecko/20100101+Firefox/4.0.1 200 0 64 12045 2015-04-24 13:48:14 xxx.xxx.xxx.xxx GET /sitepages/Home.aspx - 80 PPL\spsearchqa xxx.xxx.xxx.xxx Mozilla/5.0+(Windows+NT+5.1;+rv:2.0.1)+Gecko/20100101+Firefox/4.0.1 200 0 64 10075 Here is how my health monitor is setup: Any help would be very much appreciated. Thank you!250Views0likes3CommentsOffice 365 with APM as IdP (no ADFS), troubleshooting

hello, I have starting a non-hybrid deployment of Office365 with DirSync (sync is working). My domain is a subdomain in a forest. I followed the F5 deployment guide (manual config, no iApp) and have the office365 portal redirection to my IdP (APM 11.6 HF5) and the IdP redirection with assertion (which seems correct) to the Office 365 portal. But signon doesn't work and I get an error 80043431. Questions: cannot find Microsoft troubleshooting guides that do consider a deployment without ADFS. I would like to verify the SSO configuration of Office365 but the PS command Get-MsolFederationProperty -DomainName seem to work only with ADFS... get an error Get-MsolFederationProperty : Failed to connect to Active Directory Federation Services 2.0 on the local machine. Please try running Set-MsolADFSContext before running this command again. Does anyone knows a way to get the SSO configuration in a deployment without ADFS? has anyone gone through the same error and found the solution? Thanks Alex340Views0likes2CommentsAPM : Radius and AD same logon page fail

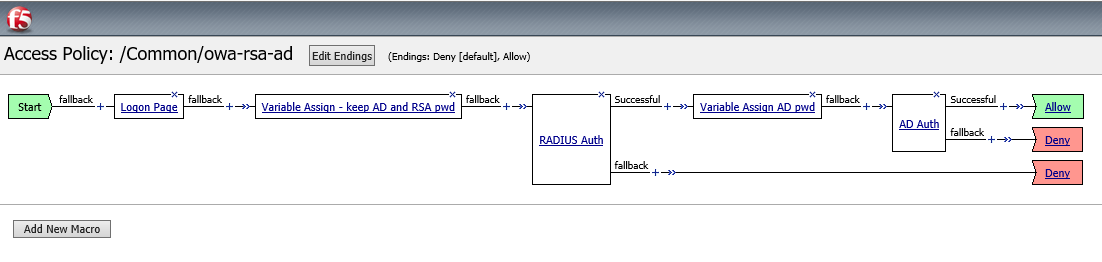

Hi, based on the following article : https://devcentral.f5.com/questions/bigip-apm-ad-rsa-auth I'm trying to implement a single logon page with these 2 Authentication mode : "Radius" and "AD" (same login for both but not the same password) : Bellow a screenshot of my current VPE applied to my VS (OWA 2010) : Variable Assign - keep AD and RSA pwd : session.logon.last.password = Session Variable session.logon.last.token (unsecure) session.logon.temp.password = Session Variable session.logon.last.password (unsecure) Variable Assign AD pwd : session.logon.last.password = Session Variable session.logon.temp.password (unsecure) Unfortunately I always have the following errors message in my APM report : * RADIUS module: authentication with 'username' failed: Access-Reject packet from host IP-of-my-radius-server * RADIUS module: parseResponse():Access-Reject packet from host IP-of-my-radius-server:port Please help me !!!240Views0likes1CommentLayer 4 redirect any help appreciated

Hey everyone, looking for some assistance in creating an iRule to use for ADFS 2012 R2. Since it no longer relies on IIS you need to create an L4 VS on the F5 LTM. Any help would be greatly appreciated!! This is what I am looking to accomplish: redirect from http to https append text to uri. Example: users type in: http://site.domain.com It is redirected to: https://site.domain.com/misc/anything.any231Views0likes2Comments