Infrastructure as Code: Automating F5 Distributed Cloud CEs with Ansible

Introduction

Welcome to the first installment of our Infrastructure as Code (IaC) series, focusing on F5 products and Ansible. This series has been a long-standing desire of mine to showcase the ability of IaC utilizing Ansible Automation Platform to deliver Day 0 through Day 2 operations with multiple F5 virtualized platforms. Over time, I've encountered numerous financial clients expressing interest in this topic. For many of these clients, the prospect of leveraging IaC to redeploy an environment outweighs the traditional approach of performing upgrades. This series will hopefully provide insight, documentation, and code for anyone embarking on this journey.

Why Ansible Automation Platform?

Like most people, I started my journey with community editions of Ansible. As my coding became more complex, so did the need to ensure that my lab infrastructure adhered to the best security guidelines required by my company (my goal being to mimic how customers would/should do things in real life). I began utilizing Ansible Automation Platform to ensure my credentials were protected, as well as to organize and share my code with the rest of my team (following the 'just in case you got hit by a bus' theory). Ansible Automation Platform utilizes execution environments (EE) to ensure code runs efficiently and cleanly every time. Now, I am also creating Execution Environments via GitHub with workflows and pushing them up to Quay.io (https://github.com/VDI-Tech-Guy/f5-execution-engines). Huge thanks to Colin McNaughton at Red Hat for making my life so much easier with building EEs!

Why deploy F5 Distributed Cloud on VMware vSphere?

As I mentioned before, I had this desire to build this Infrastructure as Code (IaC) code a while back. This was prior to the Broadcom acquisition of VMware. Being an ex-VMware employee, I had a lot of knowledge of virtualization platform infrastructure going into this project, and I started my focus on deploying on VMware vSphere.
F5 Distributed Cloud can be deployed in any cloud, anywhere. However, I really wanted to focus on on-premises deployments because not every customer can afford the cloud. Moreover, there's always a back-and-forth battle between on-premises and the cloud, which has evolved into the Hybrid Cloud and the Multi-Cloud. I do intend to extend this series to the Multi-Cloud, but these initial deployments will be focused on VMware vSphere, as it is still utilized in many organizations across the globe.

Information about the Setup in the Demo Video

If you watch the video (down below) on how the deployment works, you can see I did a bunch of pre-work in the Git repository (link in Resources) prior to launching the deployment. Here are the prework items I completed:

- A fully functional Ansible Automation Platform 2.4+ environment was set up and working (at the time, the controller version was 4.4.4).
- The Execution Environment was imported into the Ansible Automation Platform controller.
- The Project was set up to import the playbooks from the Git repository (in the Resources section below) and to use the default Execution Environment.
- The Demo Inventory was set up (in our use case we only needed the vCenter host).
- Network Credentials were set up for the vCenter.
- The Template was set up and had its variables populated (note that the API key was hidden).

As mentioned in the video (below), the variables were populated for my environment and contain all the information the deployment needs. I have provided a demo example in the Git repository for anyone who wants to adapt my settings to their own environment. The example also has comments on each field, or area of a field, describing the purpose of the variable.
    {
      "rhel_location": "https://vesio.blob.core.windows.net/releases/rhel/9/x86_64/images/vmware/rhel-9.2023.29-20231212012955-single-nic.ova",
      "xc_api_credential": "_____________________________________",
      "xc_namespace": "mmabis-automation",
      "xc_console_host": "f5-bd",
      "xc_user": "admin",
      "xc_pass": "Ansible123!",
      "vcenter_hostname": "{{ ansible_host }}",
      "vcenter_username": "{{ ansible_env.ANSIBLE_NET_USERNAME }}",
      "vcenter_password": "{{ ansible_env.ANSIBLE_NET_PASSWORD }}",
      "vcenter_validate_certs": false,
      "datacenter_name": "Apex",
      "cluster_name": "Worlds-Edge",
      "datastore": "TrueNAS-SSD",
      "dvs_switch_name": "DSC-DVS",
      "dns_name_servers": [
        "192.168.192.20",
        "192.168.192.1"
      ],
      "dns_name_search": [
        "dsc-services.local",
        "localdomain"
      ],
      "ntp_servers": [
        "0.pool.ntp.org",
        "1.pool.ntp.org",
        "2.pool.ntp.org"
      ],
      "domain_fqdn": "dsc-services.local",
      "DVS_Name": "{{dvs_switch_name}}",
      "Internal_Network": "DVS-Server-vLan",
      "External_Network": "DVS-DMZ-vLan",
      "resource_pool_name": "Lab-XC",
      "waiting_period": 2,
      "temp_download_location": "/tmp/xc-ova-download.ova",
      "xc_ova_builds": [
        {
          "hostname": "xc-automation-rhel-demo",
          "tmpl_name": "xc-automation-rhel-demo",
          "admin_password": "Ansible123!",
          "cluster_name": "xc-automation-cluster-rhel-demo",
          "dhcp": "no",
          "external_ip": "172.16.192.170",
          "external_ip_subnet_prefix": "24",
          "external_ip_gw": "172.16.192.1",
          "external_ip_route": "0.0.0.0/0",
          "internal_ip": "192.168.192.170",
          "internal_ip_subnet_prefix": "22",
          "internal_ip_gw": "192.168.192.1",
          "certified_hw": "vmware-regular-nic-voltmesh",
          "latitude": "39.51833126",
          "longitude": "-104.759496962",
          "build_count": 3,
          "nic_config": "rhel-multi"
        }
      ]
    }

Launching the Code

With all of that prework handled, it was as easy as launching the code. There were a few caveats I learned over time when dealing with the automation that I wanted to share.
- Never re-use a cluster name in F5 Distributed Cloud, especially if it was used with a different version of the CE (there were communication issues between the CEs and the previous cluster information stored in the F5 Distributed Cloud Console).
- The API credentials must be system level when accepting registrations or creating the token for importing into the environment. The code is designed to check for "{{ xc_namespace }}-token": if it exists, the code uses the existing token; if not, it tries to create it, so you need system-level permissions to do this.
- Build count should be 3 by default (it still needs to be defined), or another odd number, based on recommendations I have heard from our F5 field teams.

If I think of more, I'll definitely edit the post and make sure it's up to date. When launching the code, I was able to get the lab to build correctly multiple times, so if there is an issue or something I might not have documented well, feel free to let me know and give it a shot for yourself!

YouTube Video now on DevCentral Channel

Resources

- https://github.com/f5devcentral/f5-bd-ansible-day0-automation - The code utilized for this deployment
- https://github.com/VDI-Tech-Guy/f5-execution-engines - Building Execution Environments with GitHub and workflows

Conclusion

I do hope that this series will help everyone who wants to embrace IaC, and if you have any questions, feel free to reach out!

BIG-IP APM with Horizon 7.x HTML5 gets a Hotfix For Updated Code

Technical update on some new hotfixes that were rolled out to resolve some issues with HTML5 connectivity with VMware Horizon 7.1/7.2 with BIG-IP Access Policy Manager.

What is VMware Horizon HTML Access?

VMware Horizon HTML Access provides the ability for employees to access applications and desktops via web browsers (HTML5 compliant) and without the need for additional plugins or native client installations. This method of access provides advantages to customers who utilize very strict software installation requirements and require access to their internal resources, as well as customers who utilize BYOD based implementations. VMware Horizon HTML Access is an alternative way of accessing company internal resources without the requirement of software installation.

What does the Hotfix Do?

The hotfix allows the newer versions of the VMware Horizon HTML Access clients, which were upgraded with new URI information, to be accessible via APM. Without this hotfix, customers who upgrade to the Horizon 7.1/7.2 code may experience an issue where HTML5 will not connect to the VDI resource (blank or grey screen). The easiest way to determine whether you are affected by the issue is to look at the URL. If you do not see the string f5vdifwd within the URL, then you are most likely affected by this issue. Here is an example of a working configuration. Notice the f5vdifwd string in the URL:

https://test.test.local/f5vdifwd/vmview/68a5058e-2911-4316-849b-3d55f5b5cafb/portal/webclient/index.html#/desktop

The Hotfix Information Details

Note that the fixes are incorporated into hotfixes. F5 recommends using the hotfix builds over the iRules listed in the article below. If the iRules are in place when upgrading to a build with the incorporated fix, make sure that the iRule is removed.
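The URL check described earlier in this article (looking for the f5vdifwd string) can also be scripted when there are several published URLs to review. The following is only a minimal sketch and is not part of the F5 hotfix or the iRules. The function name `is_proxied_by_apm` and the second, "affected" URL are hypothetical, and the sketch assumes the marker shows up as a path segment, as it does in the working example.

```python
from urllib.parse import urlparse

# String the article says to look for in a working URL.
APM_VDI_MARKER = "f5vdifwd"


def is_proxied_by_apm(url: str) -> bool:
    """Return True when one of the URL's path segments is the f5vdifwd marker."""
    path_segments = urlparse(url).path.split("/")
    return APM_VDI_MARKER in path_segments


# Working example taken from the article.
working_url = (
    "https://test.test.local/f5vdifwd/vmview/"
    "68a5058e-2911-4316-849b-3d55f5b5cafb/portal/webclient/index.html#/desktop"
)
# Hypothetical URL without the marker, for comparison only.
suspect_url = "https://test.test.local/portal/webclient/index.html#/desktop"

for candidate in (working_url, suspect_url):
    status = "looks fine" if is_proxied_by_apm(candidate) else "possibly affected"
    print(f"{status}: {candidate}")
```

A missing marker is only a hint that the deployment may be affected; the release notes remain the authoritative description of the issue.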
Version 12.1.2 HF1 Release Notes
Version 13.0 HF2 Release Notes

638780-3 Handle 302 redirects for VMware Horizon View HTML5 client

Component: Access Policy Manager

Symptoms: Starting from v4.4, Horizon View HTML5 client is using new URI for launching remote sessions, and supports 302 redirect from old URI for backward compatibility.

Conditions: APM webtop with a VMware View resource assigned. HTML5 client installed on backend is of version 4.4 or later.

Impact: This fix allows for VMware HTML5 clients v4.4 or later to work properly through APM.

Workaround for versions 11.6.x and 12.x

    priority 2
    when HTTP_REQUEST {
        regexp {(/f5vdifwd/vmview/[0-9a-f\-]{36})/} [HTTP::uri] vmview_html5_prefix dummy
    }
    when HTTP_RESPONSE {
        if { ([HTTP::status] == "302") && ([HTTP::header exists "Location"]) } {
            if { [info exists vmview_html5_prefix] } {
                set location [HTTP::header "Location"]
                set location_path [URI::path $location]
                if { $location_path starts_with "/portal/" } {
                    set path_index [string first $location_path $location]
                    set new_location [substr $location $path_index]
                    regsub "/portal/" $new_location $vmview_html5_prefix new_location
                    HTTP::header replace "Location" $new_location
                }
                unset vmview_html5_prefix
            }
        }
    }

Workaround for version 13.0

    priority 2
    when HTTP_REQUEST {
        regexp {(/f5vdifwd/vmview/[0-9a-f\-]{36})/} [HTTP::uri] dummy vmview_html5_prefix
    }
    when HTTP_RESPONSE {
        if { ([HTTP::status] == "302") && ([HTTP::header exists "Location"]) } {
            if { [info exists vmview_html5_prefix] } {
                set location [HTTP::header "Location"]
                set location_path [URI::path $location]
                if { $location_path starts_with "/portal/" } {
                    set path_index [string first $location_path $location]
                    set new_location "$vmview_html5_prefix[substr $location $path_index]"
                    HTTP::header replace "Location" $new_location
                }
                unset vmview_html5_prefix
            }
        }
    }

Load Balancing VMware Identity Manager Integration Guide is now Ready!

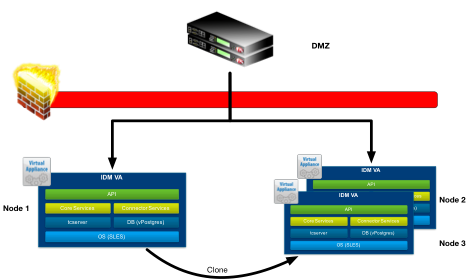

This will be the first of many articles being released on new or updated documentation for deploying F5 LTM/APM/DNS with various VMware End-User-Computing based products. I am happy to announce that our first document “Load Balancing VMware Identity Manager” is now available to the public!

What is VMware Identity Manager?

VMware Identity Manager combines applications and desktops in a single, aggregated workspace. Employees can then access the desktops and applications regardless of where they are based. With fewer management points and flexible access, Identity Manager reduces the complexity of IT administration.

What does this Integration Guide Detail?

This documentation focuses on deploying F5 LTM with VMware Identity Manager (On-Premise) for a production deployment. Typically, the first VMware Identity Manager node is set up, configured, and placed behind the load balancer; this is the focus of the document. After that is completed, the first node is shut down and then cloned to the other two nodes for a total of 3 nodes in the cluster. The document references other VMware documentation for completing this part.

Here is an example from the document that shows how to set up the advanced monitor we use to identify whether a single node within the cluster is online. This monitor is an example of how F5 does more than a simple load balancer. Most simple load balancers just check for the HTTPS header or ICMP (ping) responses to identify if a node is online. F5 worked together with VMware to identify the best way to determine whether a node within a cluster is in maintenance mode or offline due to other issues.

Create Monitor

The next task is to create the Identity Manager monitor for the BIG-IP appliance to validate when the web server is available. Use the following guidance to create a health monitor on the BIG-IP system.

- Click Local Traffic.
- Hover over Monitors.
- Click the Add button (+) to the right of Monitors to create a new health monitor.

Monitor Configuration

Create a Monitor with the following settings.

- In the Name field, type a unique name such as WorkspaceOne-Monitor.
- From the Type list, select HTTPS.
- In the Send String field, type GET /SAAS/API/1.0/REST/system/health/heartbeat HTTP/1.1\r\nHost: \r\nConnection: Close\r\n\r\n
- In the Receive String field, type ok$.
- In the Receive Disable String field, type 404.
- Click Finished.

You can now download the updated step-by-step guide for Load Balancing VMware Identity Manager.

https://f5.com/Portals/1/PDF/Partners/f5-big-ip-vmware-workspaceone-integration-guide.pdf

You can also read up on setting up a 3-Node Cluster with VMware Identity Manager.

https://communities.vmware.com/docs/DOC-33552 and http://pubs.vmware.com/identity-manager-28/index.jsp#com.vmware.wsp-install_28/GUID-A29C51E5-6FF5-4F7F-8FC2-1A0F687F6DC5.html

Special Thanks to Dean Flaming, and the VMware Identity Management team for all of their assistance putting this together!

Inside Look - PCoIP Proxy for VMware Horizon View

I sit down with F5 Solution Architect Paul Pindell to get an inside look at BIG-IP's native support for VMware's PCoIP protocol. He reviews the architecture, business value and gives a great demo on how to configure BIG-IP. BIG-IP APM offers full proxy support for PC-over-IP (PCoIP), a leading virtual desktop infrastructure (VDI) protocol. F5 is the first to provide this functionality which allows organizations to simplify their VMware Horizon View architectures. Combining PCoIP proxy with the power of the BIG-IP platform delivers hardened security and increased scalability for end-user computing. In addition to PCoIP, F5 supports a number of other VDI solutions, giving customers flexibility in designing and deploying their network infrastructure.

ps

Related:

- F5 Friday: Simple, Scalable and Secure PCoIP for VMware Horizon View
- Solutions for VMware applications
- F5's YouTube Channel
- In 5 Minutes or Less Series (24 videos – over 2 hours of In 5 Fun)
- Inside Look Series
- Life@F5 Series

Horizon Blast Extreme UDP with BEAT Support Functionality in BIG-IP Access Manager 14.0!

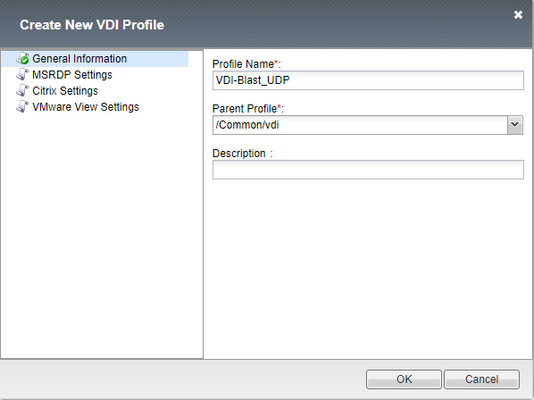

Hey All,

Just wanted to provide an update on new features that were added to BIG-IP Access Manager (formerly APM) 14.0 for VMware Horizon. Listed below are the new features that were added into Access Manager for VMware Workspace ONE and VMware Horizon.

- APM supports the Blast Extreme protocol over TCP and UDP and also supports the Blast Extreme Adaptive Transport (BEAT) for desktops and applications.
- APM supports access to VMware Horizon desktops and applications using VMware Workspace ONE as an IDP. For more information on this, check out the integration guide at https://f5.com/Portals/1/PDF/Partners/apm-proxy-with-workspace-one-integration-guide.pdf

What is the VMware Horizon Blast Extreme TCP/UDP with BEAT Feature?

Since the release of Blast Extreme in Horizon 7, F5 has supported the TCP functionality of the Blast code, allowing the VMware Horizon native client and HTML5 clients to connect to desktops and apps. BIG-IP (14.0) now supports the UDP and BEAT functionality of the Blast Extreme code.

What is BEAT?

BEAT, or Blast Extreme Adaptive Transport, allows the Blast Extreme transport to switch between TCP and UDP based on the connected client's conditions. For example, when a client is connected over a mobile network, the connectivity is sometimes unstable (packet loss and/or high latency). With a typical TCP connection, lost packets are retransmitted over and over again, creating lag from the user's desktop or app perspective in Horizon. BEAT was designed to adapt to these types of connections: it detects that packets are being lost and switches the connected client's protocol from TCP to UDP, which allows dropped packets to stay lost and the session to keep moving forward, giving the user a more seamless desktop experience. BEAT can also switch from UDP back to TCP depending on the client's connectivity.

Is there an iAPP to Enable Blast UDP?
Currently there is no iAPP for this functionality, and the existing iAPP will only create the TCP functionality for the Blast Extreme protocol. F5 intends to release a build soon to resolve this issue; this article is being posted to help customers manually create the virtual server for the Blast Extreme functionality prior to the iAPP fix. Here is the information needed to implement the Blast UDP functionality, which will enable BEAT.

NOTE: This will need to be removed when the iAPP is upgraded later to allow for the feature/function.

Create a VDI Profile

Creating the VDI Profile for Blast Extreme:

- Navigate to Access --> Connectivity/VPN --> VDI/RDP --> VDI Profiles.
- Create a new profile.
- Name it whatever you want.
- Change Parent Profile to “/Common/vdi”.
- In VMware View Settings, change from PCoIP to Blast Extreme.

Create a Virtual IP for the Blast Extreme UDP Port

- Provide a unique name.
- Match the Destination Address with the existing Horizon APM deployment.
- Service Port: 8443
- Source Address Translation: Automap
- VDI Profile: select the previously created VDI profile.
- Click Finished to create the VIP.

Validation/Testing

Once completed, you can test the connection. I recommend using the VMware Horizon Performance Tracker, as you can see the BEAT protocol in action changing from TCP to UDP.

VMware Fusion Custom Networking for BIG-IP VE Lab

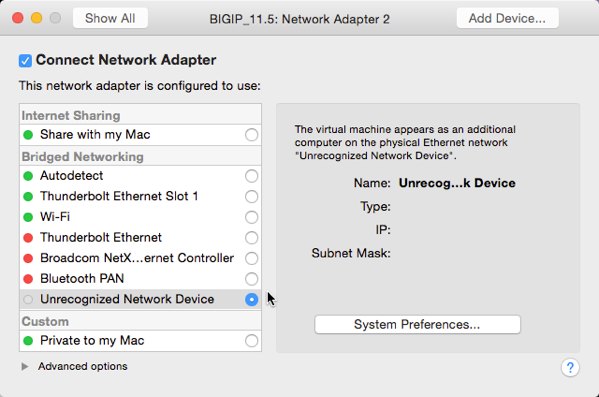

I've used VMware Workstation on Windows and Fusion on OS X for quite some time, and I'm a big fan of both platforms. That said, the lack of a network settings editor built in to Fusion (I understand it's now available in the Pro version of Fusion) can be more than a little frustrating, particularly if you want a custom experience. Why custom? Well, you just might want to do more with your BIG-IP VE Lab license than just connect to it and test iRules, and that might require more than just a NIC or two. Custom network capabilities enable you to mock up complete environments rapidly.

If you are importing an existing VE instance (as I did) and you have not yet set up your custom networking, you will see that the NIC is unrecognized if it was previously configured for additional networking, as shown in the figure below. If you are installing a new VE instance, set up your networking first. This is accomplished by editing the networking file located in your /Library tree. You can edit this file by typing this command:

    sudo vi /Library/Preferences/Vmware\ Fusion/networking

Once you are in edit mode, you can insert the text below between the VNET_1 and VNET_8 sections.
    answer VNET_2_HOSTONLY_NETMASK 255.255.255.0
    answer VNET_2_HOSTONLY_SUBNET 192.168.102.0
    answer VNET_2_VIRTUAL_ADAPTER yes
    answer VNET_2_VIRTUAL_ADAPTER_ADDR 192.168.102.1
    answer VNET_3_HOSTONLY_NETMASK 255.255.255.0
    answer VNET_3_HOSTONLY_SUBNET 192.168.103.0
    answer VNET_3_VIRTUAL_ADAPTER yes
    answer VNET_3_VIRTUAL_ADAPTER_ADDR 192.168.103.1
    answer VNET_4_HOSTONLY_NETMASK 255.255.255.0
    answer VNET_4_HOSTONLY_SUBNET 192.168.104.0
    answer VNET_4_VIRTUAL_ADAPTER yes
    answer VNET_4_VIRTUAL_ADAPTER_ADDR 192.168.104.1
    answer VNET_5_HOSTONLY_NETMASK 255.255.255.0
    answer VNET_5_HOSTONLY_SUBNET 192.168.105.0
    answer VNET_5_VIRTUAL_ADAPTER yes
    answer VNET_5_VIRTUAL_ADAPTER_ADDR 192.168.105.1
    answer VNET_6_HOSTONLY_NETMASK 255.255.255.0
    answer VNET_6_HOSTONLY_SUBNET 192.168.106.0
    answer VNET_6_VIRTUAL_ADAPTER yes
    answer VNET_6_VIRTUAL_ADAPTER_ADDR 192.168.106.1
    answer VNET_7_HOSTONLY_NETMASK 255.255.255.0
    answer VNET_7_HOSTONLY_SUBNET 192.168.107.0
    answer VNET_7_VIRTUAL_ADAPTER yes
    answer VNET_7_VIRTUAL_ADAPTER_ADDR 192.168.107.1

You don’t have to add as many vnics as I did, and you can adjust the IP space and enable DHCP (check the VNET_1 config for details) in the networks as well if desired. Save and restart Fusion and you should be good to go. Looking back at my VE instance NIC2 config, it properly placed my nic into vmnet2 (same as it was pre-import), but I now have the flexibility to change to any of these networks.

VMware Horizon and F5 iAPP Deployments Backed by Ansible Automation

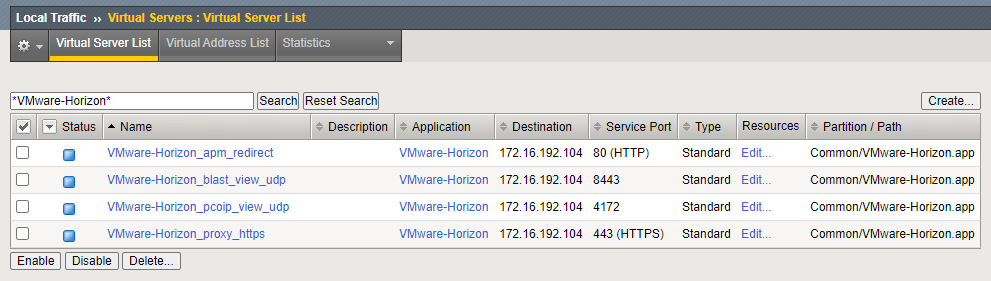

The Intro:

A little over a year ago I knew barely anything about automation, zero about Ansible, and didn't think it would become as tied to my life as it is now. I spend all my moments trying to think about how I can make automation easier in my life, and being in Business Development I spend a lot of time testing F5 solutions and integrations between vendors (specifically between F5 and VMware as well as F5 and Red Hat Ansible). I figured, why not bring them a little closer together? It takes forever to build labs and set up environments, and with automation I can do this in mere minutes compared to the hours it used to take (we are talking fresh builds, clean environments). I plan on sharing more of my VMware and Ansible automation integrations down the road (like Horizon labs that can be built from scratch and ready to test in 30 minutes or less). But I wanted to start out with something that I get a lot of questions about: is it possible to automate iApp deployments? Specifically the VMware Horizon iApp?

The answer is YOU CAN NOW! Granted, like all automation, this is a work in progress. If you have a use case you want to build using what I have started with, I encourage it!! TAKE, FORK and Expand!!!!

The Code:

All of the code I am using is completely accessible via the F5 DevCentral Git repository, so feel free to use it! What does it do? Well, if you are an F5 guru, you might think it looks similar to how our AS3 code works. If you aren't a guru, it basically takes one set of variables and sends a single command to the F5 to build the application (I tell it the things that make it work and how I want it deployed, and it does all the work for me). Keep in mind this isn't using F5 AS3 code; it just mimics the same method by taking a JSON declaration of how I want things to be, and the F5 does all of the imperative commands for me.
    ---
    - name: Build JSON payload
      ansible.builtin.template: src=f5.horizon.{{deployment_type |lower }}.j2 dest=/tmp/f5.horizon.json

    - name: Deploy F5 Horizon iApp
      f5networks.f5_modules.bigip_iapp_service: #Using Collections if not use - bigip_iapp_service:
        name: "VMware-Horizon"
        template: "{{iapp_template_name}}"
        parameters: "{{ lookup('template', '/tmp/f5.horizon.json') }}"
        provider:
          server: "{{f5_ip}}"
          user: "{{f5_user}}"
          password: "{{f5_pass}}"
          validate_certs: no
      delegate_to: localhost

All of this code can be found at - https://github.com/f5devcentral/f5-bd-horizon-iapp-deploy/

Deployments:

The F5 iApp for Horizon provides many deployment options, but they all fall into 3 buckets:

- F5 APM with VMware Horizon - The F5 acts as the gateway for all VMware Horizon connections (proxying PCoIP/Blast).
- F5 LTM with VMware Horizon - Internal connections to an environment from a LAN, with the ability to secure and load balance Connection Servers.
- F5 LTM with VMware Unified Access Gateway - The F5 load balances the VMware Unified Access Gateways (UAGs) and lets the UAGs proxy the connections.

The deployments offer the ability to utilize pre-imported certificates, set the Virtual IP, add additional Connection Servers, create the iRule for internal connections (origin header check), and much more. All of this depends on your deployment and the way you need it set up. The current code doesn't import the iApp template or the certificates; this could be done with other code but is currently not part of this code.

All three of these deployment models are part of the code, and how it's deployed is based on the variables file "{{code_directory}}/vars/horizon_iapp_vars.yml" as shown below. Keep in mind this uses clear text for some variables (e.g. the username/password for AD); you can add other ways of securing your passwords, like Ansible Vault.
    #F5 Authentication
    f5_ip: 192.168.1.10
    f5_user: admin
    f5_pass: "my_password"
    f5_admin_port: 443

    #All Deployment Types
    deployment_type: "apm" #option can be APM, LTM or UAG

    #iApp Variables
    iapp_vip_address: "172.16.192.100"
    iapp_template_name: "f5.vmware_view.v1.5.9"

    #SSL Info
    iapp_ssl_cert: "/Common/Wildcard-2022" # If want to use F5 Default Cert for Testing use "/Common/default.crt"
    iapp_ssl_key: "/Common/Wildcard-2022" # If want to use F5 Default Cert for Testing use "/Common/default.key"
    iapp_ssl_chain: "/#do_not_use#"

    #Horizon Info
    iapp_horizon_fqdn: "horizon.mycorp.com"
    iapp_horizon_netbios: "My-Corp"
    iapp_horizon_domainname: "My-Corp.com"
    iapp_horizon_nat_addresss: "" #enter NAT address or leave empty for none

    # LTM Deployment Type
    iapp_irule_origin:
      - "/Common/Horizon-Origin-Header"

    # APM and LTM Deployment Types
    iapp_horizon_connection_servers:
      - { ip: "192.168.1.50", port: "443" } # to add Connection Servers just add additional line
      - { ip: "192.168.1.51", port: "443" }

    #APM Deployment Type
    iapp_active_directory_username: "my_ad_user"
    iapp_active_directory_password: "my_ad_password"
    iapp_active_directory_password_encrypted: "no" # This is still being validated but requires the encrypted password from the BIG-IP
    iapp_active_directory_servers:
      - { name: "ad_server_1.mycorp.com", ip: "192.168.1.20" } # to add Active Directory Servers just add additional lines
      - { name: "ad_server_2.mycorp.com", ip: "192.168.1.21" }

    # UAG Deployment Type
    iapp_horizon_uag_servers:
      - { ip: "192.168.199.50", port: "443" } # to add UAG Servers Just add additional lines
      - { ip: "192.168.199.51", port: "443" }

How do the Variables integrate with the Templates?

The templates are JSON-based files into which Ansible injects the variables, depending on the deployment method called. This makes it easier to tailor templates to specific deployments, because we don't hard-code values that aren't necessary or that are part of the default deployments.
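To make the variable injection more concrete, here is a minimal sketch of the idea in plain Python. It is not the repository's code: Ansible renders the real templates with Jinja2, while this sketch swaps in the standard library's `string.Template` as a stand-in, and the template body, `render_payload`, and `template_name` are all hypothetical and heavily simplified. Only the variable names and the `f5.horizon.<type>.j2` naming come from the playbook and variables file in this article.

```python
import json
from string import Template

# Hypothetical, heavily simplified payload template. The real .j2 files in the
# repository are Jinja2 templates and contain many more settings.
PAYLOAD_TEMPLATE = Template(
    '{"variables": ['
    '{"name": "vip_address", "value": "$iapp_vip_address"},'
    '{"name": "fqdn", "value": "$iapp_horizon_fqdn"}'
    "]}"
)


def template_name(deployment_type: str) -> str:
    """Mirror the playbook's file naming: f5.horizon.<type lowercased>.j2."""
    return f"f5.horizon.{deployment_type.lower()}.j2"


def render_payload(variables: dict) -> dict:
    """Substitute the variables into the template and parse the result as JSON."""
    rendered = PAYLOAD_TEMPLATE.substitute(variables)
    return json.loads(rendered)


# Values copied from the example variables file in this article.
vars_from_file = {
    "iapp_vip_address": "172.16.192.100",
    "iapp_horizon_fqdn": "horizon.mycorp.com",
}

payload = render_payload(vars_from_file)
print(template_name("APM"))
print(json.dumps(payload, indent=2))
```

Parsing the rendered text with `json.loads` is a cheap way to catch a template that no longer produces valid JSON after a variable changes, which is one reason to keep values out of the template itself.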
Advanced deployments would require modification of the JSON code to apply specialized settings that aren't a part of the default. If you want to see more about the templates for each operation (APM/LTM/UAG), check out the JSON code at the link below:

https://github.com/f5devcentral/f5-bd-horizon-iapp-deploy/tree/main/roles/ansible-deploy-iapp/templates

The Results:

Within seconds I can deploy, configure and make changes to my deployments or even change my deployment type. Could I do this in the GUI? Absolutely, but the point is to Automate ALL THE THINGS, and being able to integrate this with solutions like Lab in a box (built from scratch including the F5) saves massive amounts of time.

Example of a VMware Horizon iApp Deployment with F5 APM done in ~12 Seconds

    [root@Elysium f5-bd-horizon-iapp-deploy]# time ansible-playbook horizon_iapp_deploy.yaml

    PLAY [localhost] ********************

    TASK [bypass-variables : ansible.builtin.stat] ********************
    ok: [localhost]

    TASK [bypass-variables : ansible.builtin.include_vars] ********************
    ok: [localhost]

    TASK [create-irule : Create F5 iRule] ********************
    skipping: [localhost]

    TASK [ansible-deploy-iapp : Build JSON payload] ********************
    ok: [localhost]

    TASK [ansible-deploy-iapp : Deploy F5 Horizon iApp] ********************
    changed: [localhost]

    PLAY RECAP ********************
    localhost : ok=4 changed=1 unreachable=0 failed=0 skipped=1 rescued=0 ignored=0

    real 0m11.954s
    user 0m6.114s
    sys 0m0.542s

Links:

All of this code can be found at - https://github.com/f5devcentral/f5-bd-horizon-iapp-deploy/

Load Balancing VMware Unified Access Gateway Integration Guide

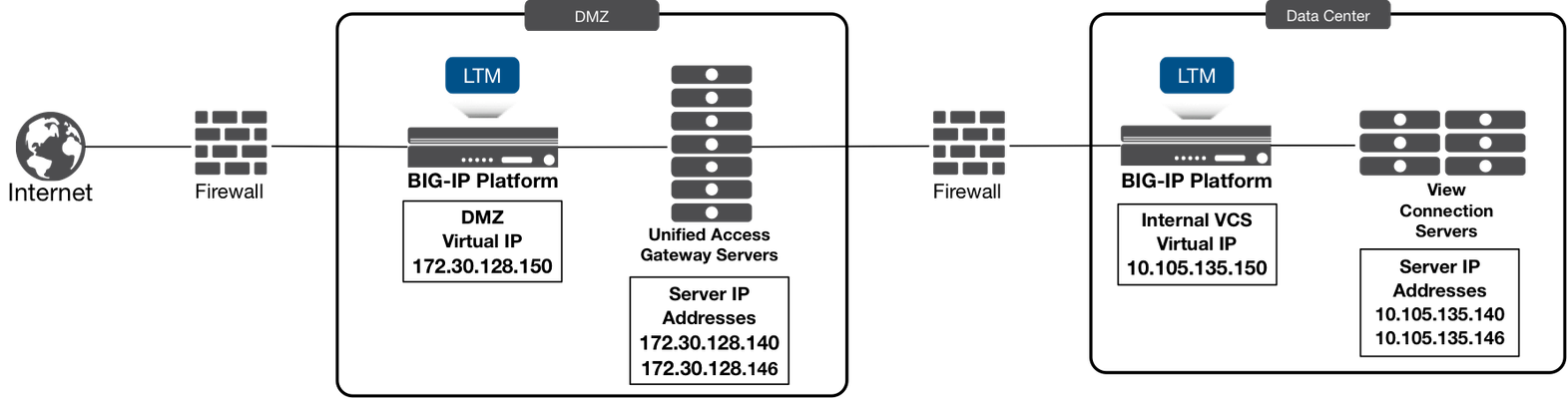

Hey All, here is the next document in the series for Integration/Deployment guides for F5 with VMware Products. This guide had a lot of requests. I am happy to announce that the next document “Load Balancing VMware Unified Access Gateway” is now available to the public!

What is VMware Unified Access Gateway?

VMware Unified Access Gateway (UAG), formerly known as VMware Access Point, is an appliance that is typically installed in the demilitarized zone (DMZ). UAG is designed to provide safe and secure access to desktop and application resources for remote access. UAG simplifies gateway access and provides tunneled and proxied resources for the following VMware product suites.

What does this Integration Guide Detail?

This documentation focuses on deploying F5 BIG-IP LTM with VMware Unified Access Gateway (UAG) for a production deployment. When Unified Access Gateway is deployed in a production scenario (n+1) it requires a load balancer sitting in front (for UAG Servers scalability) and behind it (for Connection Server load balancing). The below picture is an example of the implementation detailed in this guide; we will specifically focus on the load balancer sitting in front (for UAG Server scalability). In typical deployment scenarios the load balancer for the connection server would have already been deployed prior to the deployment of the UAG Servers. This path is recommended so that UAG can leverage the load balancer in front of the connection servers for the UAG's configuration/setup.

Here is an example from the document that shows how to set up the advanced monitor we use to identify if a single node within the cluster is online or not. This monitor is an example of how F5 does more than just a simple load balancing monitor. Most simple load balancers just check for the HTTPS header or ICMP (Ping) responses to identify if a node is online.
F5 worked together with VMware to identify the best way to determine whether a node within a cluster is in maintenance mode (Quiesce Mode) or offline due to other issues. As you can also see, we have more than one monitor to identify that the node is online. If either of the monitors fails, the node is taken offline. Both have to be online for the node to be considered "OK".

HTTPS – Second Monitor

This monitor is used to identify when the UAG node is in Quiesce Mode (maintenance). Create a simple HTTPS monitor using the following guidance.

- On the Main tab, click Local Traffic > Monitors > Create.
- In the Name field, type a unique name (different from the first).
- From the Type list, select HTTPS.
- Ensure the Parent Monitor is https.
- In the Interval field, type 30.
- In the Timeout field, type 91.
- In the Send String field, type (or copy and paste) GET /favicon.ico HTTP/1.1\r\nHost: \r\nConnection: Close\r\n\r\n
- In the Receive String field, type 200
- In the Receive Disable String field, type 503
- Leave all other settings at the default and then click Finished.

You can now download the updated step-by-step guide for Load Balancing VMware Unified Access Gateway at https://www.f5.com/pdf/solution-center/load-balancing-vmware-unified-access-gateway-servers-deployment-guide.pdf

Special Thanks to Mark Benson, and the VMware Unified Access Gateway Server development team for all of their assistance putting this together!

How is SDN disrupting the way businesses develop technology?

You must have read so much about software-defined networking (SDN) by now that you probably think you know it inside and out. However, such a nascent industry is constantly evolving and there are always new aspects to discover and learn about. While much of the attention on SDN has focused on the technological benefits it brings, potential challenges are beginning to trouble some SDN watchers.

While many businesses acknowledge that the benefits of SDN are too big to ignore, there are challenges to overcome, particularly with the cultural changes that it brings. In fact, according to attendees at the recent Open Networking Summit (ONS), the cultural changes required to embrace SDN outweigh the technological challenges. One example, outlined in this TechTarget piece, is that the (metaphorical) wall separating network operators and software developers needs to be torn down; network operators need coding skills and software developers will need to be able to program networking services into their applications.

That’s because SDN represents a huge disruption to how organisations develop technology. With SDN, the speed of service provisioning is dramatically increased; provisioning networks becomes like setting up a VM... a few clicks of a button and you’re done. This centralised network provisioning means the networking element of development is no longer a bottleneck; it’s ready and available right when it’s needed.

There’s another element to consider when it comes to SDN, tech development and its culture. Much of what drives software-defined networking is open source, and dealing with that is something many businesses may not have a lot of experience with. Using open source SDN technologies means a company will have to contribute something back to the community - that’s how open source works.
But for some that may prove to be a bit of an issue: some SDN users such as banks or telecoms companies may feel protective of their technology and not want its source code to be released to the world. But that is the reality of the open source SDN market, so it is something companies will have to think carefully about. Are the benefits of SDN for tech development worth going down the open source route? That’s a question only the companies themselves can answer.

Software-defined networking represents a huge disruption to the way businesses develop technology. It makes things faster, easier and more convenient during the process and, from a management and scalability point of view, going forward. There will be challenges - there always are when disruption is on the agenda - but if they can be overcome SDN could well usher in a new era of technological development.

VMware Cloud for AWS - BIG-IP in Single-Site, Hybrid, and Multi-Cloud Deployments

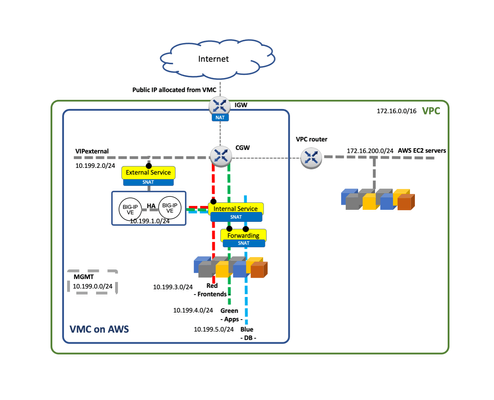

Introduction

This is article 2 of 2. The aim is to provide useful information for planning a VMC/multi-cloud deployment, for example when creating an HLD (High Level Design) document. For information about VMC and the aspects relevant to BIG-IP, please see the previous article, VMware Cloud for AWS - Networking and High Availability.

VMC uses NSX-T for networking, but currently on AWS only a single Tier-1 Gateway is allowed, which limits the possible networking topologies. In this blog post we describe a suggested topology for BIG-IP in VMC for AWS. This baseline arrangement can be used in the multi-cloud sample topology presented later. VMware’s HCX migration tool is also mentioned.

BIG-IP in a single site

Of the 4 topologies described in the F5 BIG-IP deployment guide for NSX-T, customers are currently constrained to Topology D, which uses SNAT by default. This topology is shown in the next diagram. In this sample topology, we create a typical 3-tier architecture with Frontend (External Service), Application (Internal Service) and Database tiers. Notice that the Database tier is configured as “Disconnected” to provide an additional layer of security by controlling access through a VIP in the BIG-IP.

The above topology can be expanded with multiple BIG-IP Scale-N clusters. This would allow isolation between different business units or departments, each one with its own BIG-IP Scale-N cluster. Although out of scope for this blog, it is worth remembering that in these multi-cluster BIG-IP deployments (in a single site or multiple sites) BIG-IQ can be used for global visibility across sites and centralized management.

Using EC2 workloads

From the point of view of the BIG-IPs, VMC is just another routing environment from which they can also access EC2 workloads. These workloads can be dynamically incorporated into BIG-IP’s configuration by using AS3’s Service Discovery feature. Moreover, reachability of the VMs is the same either from VMC to VPC or vice versa.
The same applies to the Internet access. This opens the following dilemmas:

- Where to place the BIG-IPs?
- Where to place the Internet Gateway?

There is no definitive answer. We can choose whether we want each functionality in the AWS VPC or on the VMC side. This is shown in the next figure. The decision should consider the following aspects:

- At the time of this writing, using an AWS IGW instead of an IGW via VMC makes it possible to use ELBs, which provide AWS Shield Advanced capabilities.
- The cost will depend on where we have more traffic and where we have more compute resources.

Using HCX

VMware's HCX covers several migration-related use cases including Disaster Recovery. HCX's Network Extension capability permits keeping the same IP and MAC addresses during a VM migration. This minimizes service disruption and is transparent to all devices including BIG-IP. Furthermore, HCX doesn’t mandate how the services are exposed externally, so GSLB is always a valid option and will provide greater flexibility compared to a plain routing option.

BIG-IP in Multi-cloud

Multi-cloud allows for many use cases and, as a consequence, many designs are possible. Ultimately the design will be highly dependent on the applications and on the databases, which most of the time require replication across sites. From the point of view of BIG-IP there are very few restrictions. Next we will describe two multi-cloud scenarios:

- A hybrid design focused on local data retention, implemented with a single site plus cloud bursting.
- A generic multi-cloud design that can be applied to any public cloud or private data center.

Single site with cloud bursting design

The topology described next is suitable for smaller deployments or when data must be stored on-premises, usually because of data retention policies or regulations. This can be observed in the next figure, where the DB Tier is not stretched to the Public Cloud.
In this architecture the on-premises data center is stretched to a public cloud when load conditions require more compute. In this scenario Internet access is kept in the on-premises data center. It requires a high-performance, low-latency Direct Connect link. This usually means staying within the metropolitan area of the on-premises facility. This Direct Connect circuit needs to be established once, and its capacity increased ahead of peak periods. Some housing vendors allow the circuit’s capacity to be changed programmatically.

When compute changes dynamically, it is a perfect fit for the Service Discovery feature of F5’s AS3, which automatically populates the pools with the added or removed compute instances. Please check the clouddocs.f5.com site for this and other automation options.

Generic multi-Cloud design

In general, F5 recommends Global Server Load Balancing (GSLB) for multi-cloud because it has the following benefits: cross-cloud vendor, name based/high degree of control, stickiness and IP intelligence. GSLB is available from F5 in two form factors: Software as a Service (SaaS) with F5 Cloud Services’ DNS LB service, and self-managed with F5 BIG-IP’s DNS module.

Designs depend on the applications and on databases. Inter-site dependencies play a crucial role. This guide recommends following these design principles to minimize cost and maximize reliability while keeping simplicity in mind:

1. Typically, ADCs like BIG-IP deal with Frontend-tier and App-tier servers, which should not have to talk with peers in other sites. These tiers have the highest throughput and latency demands, so inter-site communication should be avoided. Otherwise, it could result in uneven performance and increased, unnecessary costs.
2. Identify strictly necessary inter-site dependencies. The typical case is DB replication, which has much lower throughput demands. Latency is also a lesser issue because replication often happens asynchronously.
3. There are other very relevant sources of inter-site traffic such as automation, VM migration and data-store replication (for example a repository of images). VMware’s HCX traffic fits in this category. The first two items in this list deal with traffic that is generated upon client requests (blue arrows in the figure below). The third item, on the other hand, is a new category of traffic (orange arrows) that is not expected to have dependencies when handling an ongoing customer request. Another characteristic of this traffic is that its demands will greatly depend on the frequency of updates in the applications.
4. Simpler sites are easier to manage, scale, and replicate. GSLB allows for distribution of workloads based on a site’s or a service’s load and capacity, so it is perfectly fine to have differently sized data centers. The most important attribute is to have them architecturally equal.
5. Automations that are cross-cloud vendor capable are advised.

Using BIG-IP DNS and following the above guidelines we can create a cross-cloud vendor solution using GSLB. This is shown in the next figure. Probably the most remarkable aspects of the diagram are the network dependencies and demands, which drive the design. In this diagram inter-site dependency is reduced to the minimum, typically DB replication only. We can also see that there is additional inter-site traffic such as the BIG-IP DNS iQuery (used for service discovery and health probing), but this traffic is different in nature because it is failure tolerant.

In the design above, the DNS functionality is implemented in standalone BIG-IPs because redundancy is accomplished by having an independent BIG-IP DNS at each site. Having this BIG-IP DNS separated from the BIG-IP Scale-N cluster that handles client traffic gives clarity in the diagram and, more importantly, sets a clear demarcation of functions. If desired, the BIG-IP DNS functionality can be consolidated in the BIG-IP Scale-N cluster at each site.
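The point that GSLB can distribute workloads according to each site's load and capacity, so that differently sized data centers are fine, can be illustrated with a small sketch. This is a simplified model and not the algorithm BIG-IP DNS uses. The `Site` type, its fields and the site names are hypothetical, and "pick the healthy site with the lowest load-to-capacity ratio" is an assumed policy chosen for illustration.

```python
from dataclasses import dataclass
from typing import Iterable, Optional


@dataclass(frozen=True)
class Site:
    name: str
    healthy: bool   # result of health probing (hypothetical input)
    capacity: int   # maximum concurrent sessions the site can serve
    load: int       # sessions currently being served


def pick_site(sites: Iterable[Site]) -> Optional[Site]:
    """Return the healthy site with the lowest utilization, or None."""
    candidates = [s for s in sites if s.healthy and s.capacity > 0]
    if not candidates:
        return None
    # Utilization is relative, so a small site is not penalized for its size.
    return min(candidates, key=lambda s: s.load / s.capacity)


sites = [
    Site("on-prem", healthy=True, capacity=1000, load=800),    # 80% used
    Site("vmc-aws", healthy=True, capacity=400, load=100),     # 25% used
    Site("other-cloud", healthy=False, capacity=600, load=0),  # failed probe
]
chosen = pick_site(sites)
print(chosen.name if chosen else "no site available")
```

Because the comparison uses utilization and not absolute numbers, the smaller `vmc-aws` site is chosen here over the larger but busier `on-prem` site, and the unhealthy site is never considered.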
At extra cost, BIG-IP DNS could be placed in Internet exchanges. This allows BIG-IP DNS:

- To be closer to the clients. This only slightly improves DNS performance, since clients’ local DNS resolvers usually reply from their DNS cache.
- To have a closer view of the clients’ network performance and reachability to the clouds. This is very relevant.

At the end of the day all designs have their pros and cons, and a balancing act has to be done. In any case simplicity should always be a priority. In this respect, BIG-IP DNS has very few constraints and greatly simplifies any existing deployment by providing automatic service discovery.

Conclusion

BIG-IP integrates into VMC the same way it does into NSX-T: by using routing. In the case of VMC on AWS, there are at present limitations that prevent using the same topologies as in private clouds. BIG-IQ can be leveraged to simplify the management of multiple BIG-IPs in the same or multiple sites.

GSLB is king for multi-cloud deployments. It is cross-cloud vendor and provides greater flexibility and functionality than plain routed options. Multi-cloud is a wide topic, and we refer to the F5 BIG-IP deployment guide for NSX-T for a more detailed discussion of the topics described in this blog.
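As a companion to the AS3 Service Discovery feature mentioned in the EC2 and cloud bursting sections, the following Python sketch builds an AS3-style declaration whose pool members are discovered from AWS tags. Treat it as a hedged illustration: the property names follow the AS3 schema as commonly documented on clouddocs.f5.com and should be verified against the AS3 version in use. The tenant name, tag, region and virtual address are hypothetical placeholders, and credentials are deliberately left out.

```python
import json


def build_declaration(tenant, region, tag_key, tag_value, virtual_address):
    """Build an AS3-style declaration with an AWS-discovered pool (sketch)."""
    discovered_member = {
        "servicePort": 80,
        "addressDiscovery": "aws",   # discover members from EC2 metadata
        "region": region,
        "tagKey": tag_key,           # instances carrying this tag join the pool
        "tagValue": tag_value,
        "addressRealm": "private",   # use private addresses over the routed link
        "updateInterval": 60,        # seconds between discovery runs
        # Assumption: AWS credentials are supplied separately as described in
        # the AS3 documentation; they are omitted from this sketch.
    }
    return {
        "class": "AS3",
        "action": "deploy",
        "declaration": {
            "class": "ADC",
            "schemaVersion": "3.0.0",
            tenant: {
                "class": "Tenant",
                "app": {
                    "class": "Application",
                    "template": "http",
                    "serviceMain": {
                        "class": "Service_HTTP",
                        "virtualAddresses": [virtual_address],
                        "pool": "web_pool",
                    },
                    "web_pool": {
                        "class": "Pool",
                        "monitors": ["http"],
                        "members": [discovered_member],
                    },
                },
            },
        },
    }


declaration = build_declaration(
    tenant="Demo_Tenant",          # hypothetical names and values
    region="us-west-2",
    tag_key="role",
    tag_value="web",
    virtual_address="192.0.2.10",  # documentation-range address
)
print(json.dumps(declaration, indent=2))
```

The printed JSON is what would be posted to the AS3 endpoint of a BIG-IP. Generating it from code keeps the same declaration reusable across sites, which fits the advice to keep sites architecturally equal.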